[Translation] Library for online handwriting recognition system using the UNIPEN database

By robot-v1.0

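Article link: https://www.kyfws.com/ai/library-for-online-handwriting-recognition-system-zh/

Copyright notice: unless otherwise stated, all articles on this blog are licensed under BY-NC-SA. Please credit the source when reposting.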

Original article: https://www.codeproject.com/Articles/363596/Library-for-online-handwriting-recognition-system

Original author: Vietdungiitb

Translated by this site's robot-v1.0

Preface

A library for handwriting recognition systems that can recognize digits with 99% accuracy, or capital letters plus digits with 90% accuracy.

- Download capital_letters__digit_89_.zip - 5.6 MB

- Download lowcase_letter_89_.zip - 5.6 MB

- Download numberic_97_.zip - 2.3 MB

- Download source - 1 MB

- Download demo - 114.9 KB

Introduction

This project started from my wish to create a small program for a surface computer (a Windows 8 or Android tablet) that can recognize what my 5-year-old daughter draws on it and help her learn numbers and letters. I know that work involving machine learning and pattern recognition is very hard. The program may not be finished until my daughter completes secondary school, but it is a good reason for me to spend my free time on it. So far the project has produced several good results: a library for manipulating the UNIPEN database, a library for creating a neural network dynamically at runtime, and some classes for character segmentation. These results have encouraged me to continue developing the project and to share it with the community, to help beginners learn pattern recognition techniques in general and online handwriting recognition techniques in particular.

The demo can recognize not only digits but also letters drawn on a mouse drawing control, by using multiple neural networks at the same time.

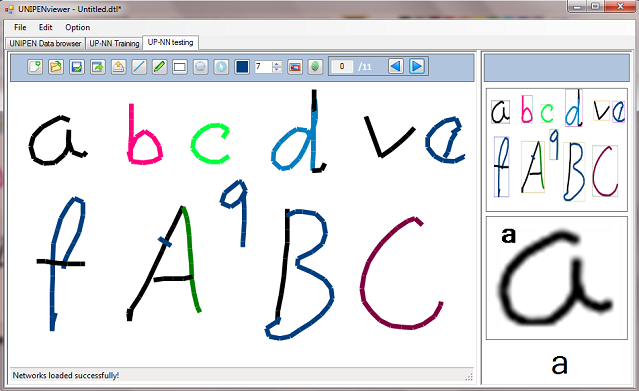

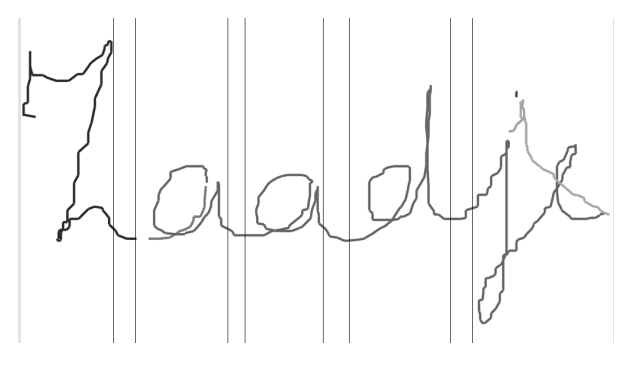

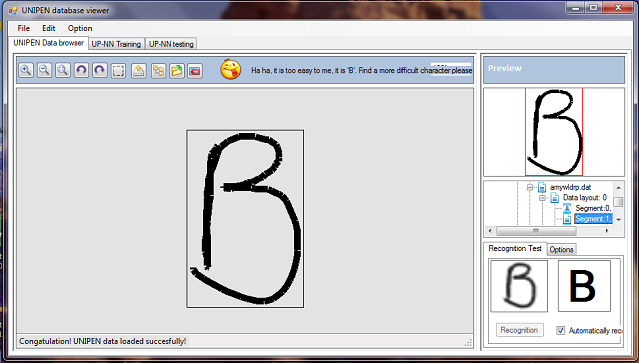

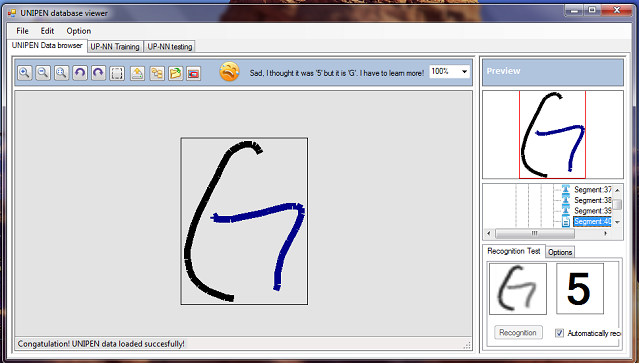

Picture 1a: Isolated character segmentation

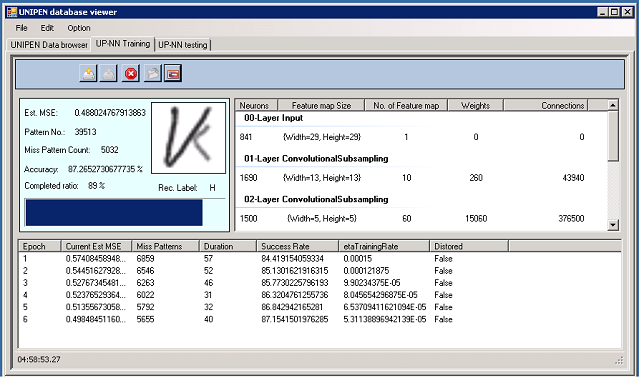

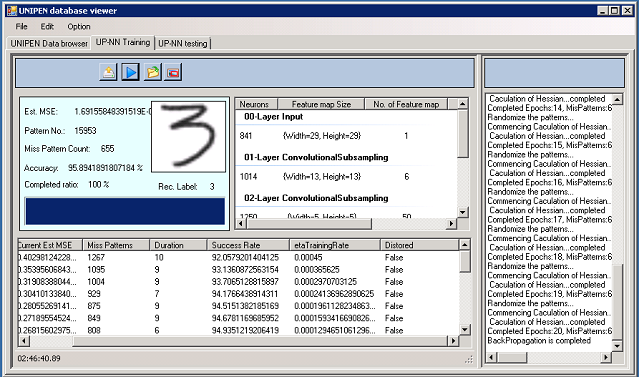

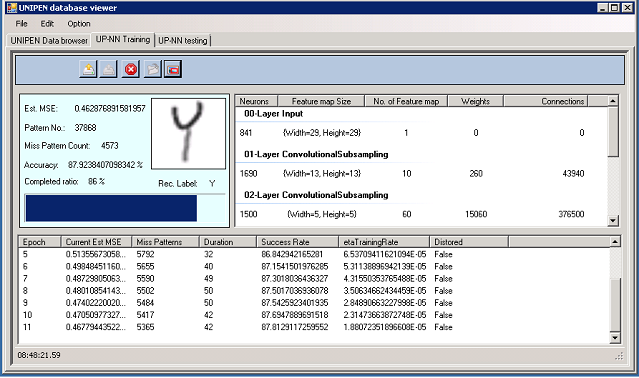

Picture 1b: Convolution network for capital letters and digits recognition

Background

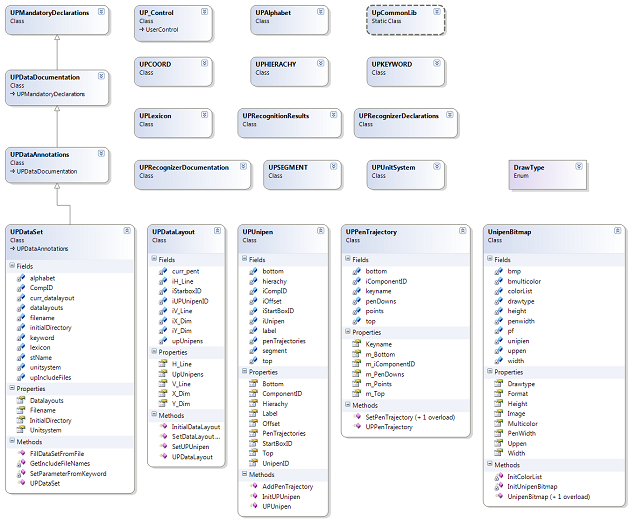

This library is divided into three parts:

Part 1: UNIPEN online handwriting training database library: it has several classes for manipulating the UNIPEN database, one of the most popular handwriting databases in the world.

Part 2: Convolution neural network library: the library is organized around the objects of a neural network, with classes for the network, layer, neuron, weight, connection, activation function, forward propagation and back propagation. It lets a beginner create not only a traditional neural network but also a convolution network with minimal effort. In particular, the library supports creating a network at runtime, so we can create or swap different networks while the program is running.

Part 3: Image segmentation library: a set of functions for image pre-processing and segmentation. It is still under development.

Picture 2: Character segmentation

These techniques were introduced in my previous articles "UPV - UNIPEN online handwriting recognition database viewer control" and "Neural Network for Recognition of Handwritten Digits in C#". This article is a synthesis of them that gives a more general view of a handwriting recognition system. Here I highlight the method used to turn UNIPEN data into the input of a recognizer. A convolution network for capital letters and digits recognition is also described, in order to explain how to use the library.

The UNIPEN and its format

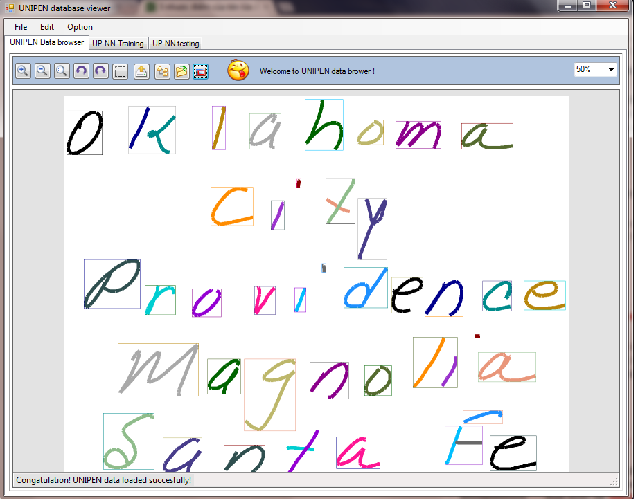

Picture 2: UNIPEN data browser with a function for capital letters and digits recognition.

In a large collaborative effort, a wide range of research institutes and industry partners generated the UNIPEN standard and database. Originally hosted by NIST, the data was divided into two distributions, dubbed the trainset and the devset. Since 1999, the International UNIPEN Foundation (iUF) has hosted the data, with the goal of safeguarding the distribution of the trainset and promoting the use of online handwriting in research and applications. In recent years, dozens of researchers have used the trainset and reported experimental performance results. Many have reported well-established research with proper recognition rates, but all applied some particular configuration of the data. In most cases the data were decomposed, using some specific procedure, into three subsets for training, testing and validation. Therefore, although the same source of data was used, recognition results cannot really be compared, because different decomposition techniques were employed.

For some time now, it has been the goal of the iUF to organize a benchmark on the remaining data set, the devset. Although the devset is available to some of the original contributors to UNIPEN, it has not yet been officially released to a broad audience. I have not had the chance to work with it.

Because the UNIPEN trainset is a collection of particular datasets from different research institutes, these datasets are usually decomposed using some dataset-specific procedure. My approach is a little different: I tried to find common points in the structure of these datasets in order to create one procedure that decomposes all datasets in the trainset correctly in most cases.

The trainset is organized as follows:

The original article links to a description of the UNIPEN format. The format can be thought of as a sequence of pen coordinates, annotated with various information, including segmentation and labeling. The pen trajectory is encoded as a sequence of components, .PEN_DOWN and .PEN_UP, containing pen coordinates (e.g. X Y or X Y T, as declared in .COORD). The .DT instruction makes it possible to specify the elapsed time between two components. The database is divided into one or several data sets, each starting with .START_SET. Within a set, components are implicitly numbered, starting from zero. Segmentation and labeling are provided by the .SEGMENT instruction, which uses component numbers to delineate sentences, words and characters. A segmentation hierarchy (e.g. SENTENCE WORD CHARACTER) is declared with .HIERARCHY. Because components are referred to by a unique combination of set name and order number within that set, it is possible to separate the .SEGMENT instructions from the data itself.
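
To make the component numbering concrete, here is a minimal sketch of reading pen components from UNIPEN text. It is not the library's parser: the names `PenComponent` and `UnipenSketch` are hypothetical, and the sketch assumes a single data set with `.COORD X Y` (two integer values per coordinate line).

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;

// Hypothetical types for illustration; they are not part of the article's library.
public sealed class PenComponent
{
    public int Index;        // implicit order number within the set, starting from zero
    public bool PenDown;     // true for .PEN_DOWN, false for .PEN_UP
    public List<(int X, int Y)> Points = new List<(int X, int Y)>();
}

public static class UnipenSketch
{
    // Splits UNIPEN text into pen components.
    // Assumption: one data set per file and two coordinates (X Y) per line.
    public static List<PenComponent> ParseComponents(string text)
    {
        var components = new List<PenComponent>();
        PenComponent current = null;

        foreach (string raw in text.Split('\n'))
        {
            string line = raw.Trim();
            if (line.Length == 0) continue;

            bool down = line.StartsWith(".PEN_DOWN", StringComparison.Ordinal);
            bool up = line.StartsWith(".PEN_UP", StringComparison.Ordinal);

            if (down || up)
            {
                // A new component starts; its number is its position in the list.
                current = new PenComponent { Index = components.Count, PenDown = down };
                components.Add(current);
            }
            else if (line[0] == '.')
            {
                // Any other keyword ends the current run of coordinates.
                current = null;
            }
            else if (current != null)
            {
                string[] parts = line.Split(new[] { ' ', '\t' }, StringSplitOptions.RemoveEmptyEntries);
                if (parts.Length >= 2
                    && int.TryParse(parts[0], NumberStyles.Integer, CultureInfo.InvariantCulture, out int x)
                    && int.TryParse(parts[1], NumberStyles.Integer, CultureInfo.InvariantCulture, out int y))
                {
                    current.Points.Add((x, y));
                }
            }
        }
        return components;
    }
}
```

A `.SEGMENT` line can then refer to these components by their `Index` values, which is why the annotations can live apart from the coordinate data.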

In general, a UNIPEN data file consists of keywords, which are divided into several groups: mandatory declarations, data documentation, alphabet, lexicon, data layout, unit system, pen trajectory, and data annotations. To read and categorize these keywords, I built a collection of classes based on the groups above; they help me extract and categorize all the necessary information from a data file.

Although the UNIPEN format is keyword-based, the keywords do not appear in a fixed order. I created a DataSet class that works like a storage rack: when a keyword is found, it is categorized and put on the corresponding shelf. In principle each UNIPEN file contains one or several data sets, but in most cases a file contains a single one. My library currently handles only this case.
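
The storage-rack idea can be sketched as follows. This is only an illustration, not the library's DataSet class: `KeywordRackSketch` is a hypothetical name, and the keyword-to-group table is a small assumed subset of the UNIPEN keywords.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper for illustration; the library's DataSet class is not shown in the article.
public static class KeywordRackSketch
{
    // Assumed, partial mapping from keyword to group.
    private static readonly Dictionary<string, string> GroupOf = new Dictionary<string, string>
    {
        { ".VERSION", "Mandatory declarations" },
        { ".COORD", "Mandatory declarations" },
        { ".HIERARCHY", "Mandatory declarations" },
        { ".ALPHABET", "Alphabet" },
        { ".LEXICON", "Lexicon" },
        { ".X_DIM", "Data layout" },
        { ".Y_DIM", "Data layout" },
        { ".X_POINTS_PER_INCH", "Unit system" },
        { ".Y_POINTS_PER_INCH", "Unit system" },
        { ".PEN_DOWN", "Pen trajectory" },
        { ".PEN_UP", "Pen trajectory" },
        { ".DT", "Pen trajectory" },
        { ".SEGMENT", "Data annotations" },
    };

    // Puts every keyword line on the shelf of its group, whatever order the lines arrive in.
    public static Dictionary<string, List<string>> Categorize(IEnumerable<string> lines)
    {
        var racks = new Dictionary<string, List<string>>();
        foreach (string raw in lines)
        {
            string line = raw.Trim();
            if (line.Length == 0 || line[0] != '.') continue;   // only keyword lines are shelved

            int end = line.IndexOfAny(new[] { ' ', '\t' });
            string keyword = end < 0 ? line : line.Substring(0, end);
            string group = GroupOf.TryGetValue(keyword, out string g) ? g : "Uncategorized";

            if (!racks.TryGetValue(group, out List<string> shelf))
            {
                shelf = new List<string>();
                racks[group] = shelf;
            }
            shelf.Add(line);
        }
        return racks;
    }
}
```

Because each line is routed by its keyword alone, the order of keywords in the file does not matter to the caller.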

Getting training patterns (pen trajectory bitmaps) from the trainset with the library is very simple:

    private void btnOpen_Click(object sender, EventArgs e)
    {
        if (dataProvider.IsDataStop == true)
        {
            // No load is running: ask for a folder and start loading in the background.
            try
            {
                FolderBrowserDialog fbd = new FolderBrowserDialog();
                // Show the FolderBrowserDialog.
                DialogResult result = fbd.ShowDialog();
                if (result == DialogResult.OK)
                {
                    bool fn = false;
                    string folderName = fbd.SelectedPath;
                    Task[] tasks = new Task[2];
                    isCancel = false;

                    // Task 0: read the UNIPEN files and build the training patterns.
                    tasks[0] = Task.Factory.StartNew(() =>
                    {
                        dataProvider.IsDataStop = false;
                        this.Invoke(DelegateAddObject, new object[] { 0, "Getting image training data, please be patient...." });
                        dataProvider.GetPatternsFromFiles(folderName); //get patterns with default parameters
                        dataProvider.IsDataStop = true;
                        if (!isCancel)
                        {
                            this.Invoke(DelegateAddObject, new object[] { 1, "Congatulation! Image training data loaded succesfully!" });
                            dataProvider.Folder.Dispose();
                            isDatabaseReady = true;
                        }
                        else
                        {
                            this.Invoke(DelegateAddObject, new object[] { 98, "Sorry! Image training data loaded fail!" });
                        }
                        fn = true;
                    });

                    // Task 1: drive the progress indicator until task 0 has finished.
                    tasks[1] = Task.Factory.StartNew(() =>
                    {
                        int i = 0;
                        while (!fn)
                        {
                            Thread.Sleep(100);
                            this.Invoke(DelegateAddObject, new object[] { 99, i });
                            i++;
                            if (i >= 100)
                                i = 0;
                        }
                    });
                }
            }
            catch (Exception ex)
            {
                MessageBox.Show(ex.ToString());
            }
        }
        else
        {
            // A load is running: offer to cancel it.
            DialogResult result = MessageBox.Show("Do you really want to cancel this process?", "Cancel loadding Images", MessageBoxButtons.YesNo);
            if (result == DialogResult.Yes)
            {
                dataProvider.IsDataStop = true;
                isCancel = true;
            }
        }
    }
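
The article does not show how a pen trajectory becomes a bitmap, so the following is only a rough sketch of one possible approach, not the library's implementation. `TrajectoryBitmapSketch` and its parameters are hypothetical; the sketch scales the strokes uniformly into a square grid (29 x 29 would match the network input used later) and inks the cells along each segment. It keeps the input's Y direction as-is, which is an assumption.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper for illustration only.
public static class TrajectoryBitmapSketch
{
    // Draws the strokes into a size x size grid; 255 marks ink, 0 marks background.
    public static byte[,] Rasterize(IList<IList<(double X, double Y)>> strokes, int size)
    {
        double minX = double.MaxValue, minY = double.MaxValue;
        double maxX = double.MinValue, maxY = double.MinValue;
        foreach (var stroke in strokes)
        {
            foreach (var p in stroke)
            {
                minX = Math.Min(minX, p.X); maxX = Math.Max(maxX, p.X);
                minY = Math.Min(minY, p.Y); maxY = Math.Max(maxY, p.Y);
            }
        }

        var bitmap = new byte[size, size];
        if (minX > maxX) return bitmap;   // no points at all

        // One scale factor for both axes keeps the aspect ratio of the drawing.
        double extent = Math.Max(maxX - minX, maxY - minY);
        double scale = extent > 0 ? (size - 1) / extent : 0.0;

        foreach (var stroke in strokes)
        {
            for (int i = 0; i < stroke.Count; i++)
            {
                var a = stroke[i];
                var b = stroke[Math.Min(i + 1, stroke.Count - 1)];
                double x0 = (a.X - minX) * scale, y0 = (a.Y - minY) * scale;
                double x1 = (b.X - minX) * scale, y1 = (b.Y - minY) * scale;

                // Sample the segment densely enough that no grid cell on it is skipped.
                int steps = (int)Math.Ceiling(Math.Max(Math.Abs(x1 - x0), Math.Abs(y1 - y0))) + 1;
                for (int s = 0; s <= steps; s++)
                {
                    double t = (double)s / steps;
                    int col = (int)Math.Round(x0 + t * (x1 - x0));
                    int row = (int)Math.Round(y0 + t * (y1 - y0));
                    bitmap[row, col] = 255;
                }
            }
        }
        return bitmap;
    }
}
```

Only pen-down components would normally be passed in as strokes, since pen-up movement leaves no ink.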

After that, the patterns become the training data for a neural network:

    private void btTrain_Click(object sender, EventArgs e)
    {
        if (isDatabaseReady && !isTrainingRuning)
        {
            TrainingParametersForm form = new TrainingParametersForm();
            form.Parameters = nnParameters;
            DialogResult result = form.ShowDialog();
            if (result == DialogResult.OK)
            {
                nnParameters = form.Parameters;
                ByteImageData[] dt = new ByteImageData[dataProvider.ByteImagePatterns.Count];
                dataProvider.ByteImagePatterns.CopyTo(dt);
                nnParameters.RealPatternSize = dataProvider.PatternSize;
                if (network == null)
                {
                    CreateNetwork(); //create network for training
                    NetworkInformation();
                }
                var ntraining = new Neurons.NNTrainPatterns(network, dt, nnParameters, true, this);
                tokenSource = new CancellationTokenSource();
                token = tokenSource.Token;
                this.btTrain.Image = global::NNControl.Properties.Resources.Stop_sign;
                this.btLoad.Enabled = false;
                this.btnOpen.Enabled = false;
                maintask = Task.Factory.StartNew(() =>
                {
                    if (stopwatch.IsRunning)
                    {
                        // Stop the timer; show the start and reset buttons.
                        stopwatch.Stop();
                    }
                    else
                    {
                        // Start the timer; show the stop and lap buttons.
                        stopwatch.Reset();
                        stopwatch.Start();
                    }
                    isTrainingRuning = true;
                    ntraining.BackpropagationThread(token);
                    if (token.IsCancellationRequested)
                    {
                        String s = String.Format("BackPropagation is canceled");
                        this.Invoke(this.DelegateAddObject, new Object[] { 4, s });
                        token.ThrowIfCancellationRequested();
                    }
                }, token);
            }
        }
        else
        {
            tokenSource.Cancel();
        }
    }

Convolution neural network

The theory of convolution networks has been described in my previous article and in several others on CodeProject. In this article, I focus only on what has changed in this library compared with the previous program.

This library has been completely rewritten to fit my current requirements: it should be easy to use for beginners who do not need deep knowledge of neural networks; creating a neural network should be simple; network parameters should be changeable without changing code; and, above all, different networks should be exchangeable at runtime.

The CreateNetwork function in the previous program:

    private bool CreateNNNetWork(NeuralNetwork network)
    {
        NNLayer pLayer;
        int ii, jj, kk;
        int icNeurons = 0;
        int icWeights = 0;
        double initWeight;
        String sLabel;
        var m_rdm = new Random();

        // layer zero, the input layer.
        // Create neurons: exactly the same number of neurons as the input
        // vector of 29x29=841 pixels, and no weights/connections
        pLayer = new NNLayer("Layer00", null);
        network.m_Layers.Add(pLayer);
        for (ii = 0; ii < 841; ii++)
        {
            sLabel = String.Format("Layer00_Neuro{0}_Num{1}", ii, icNeurons);
            pLayer.m_Neurons.Add(new NNNeuron(sLabel));
            icNeurons++;
        }
        //double UNIFORM_PLUS_MINUS_ONE= (double)(2.0 * m_rdm.Next())/Constants.RAND_MAX - 1.0 ;

        // layer one:
        // This layer is a convolutional layer that has 6 feature maps. Each feature
        // map is 13x13, and each unit in the feature maps is a 5x5 convolutional kernel
        // of the input layer.
        // So, there are 13x13x6 = 1014 neurons, (5x5+1)x6 = 156 weights
        pLayer = new NNLayer("Layer01", pLayer);
        network.m_Layers.Add(pLayer);
        for (ii = 0; ii < 1014; ii++)
        {
            sLabel = String.Format("Layer01_Neuron{0}_Num{1}", ii, icNeurons);
            pLayer.m_Neurons.Add(new NNNeuron(sLabel));
            icNeurons++;
        }
        for (ii = 0; ii < 156; ii++)
        {
            sLabel = String.Format("Layer01_Weigh{0}_Num{1}", ii, icWeights);
            initWeight = 0.05 * (2.0 * m_rdm.NextDouble() - 1.0);
            pLayer.m_Weights.Add(new NNWeight(sLabel, initWeight));
        }

        // interconnections with previous layer: this is difficult
        // The previous layer is a top-down bitmap image that has been padded to size 29x29
        // Each neuron in this layer is connected to a 5x5 kernel in its feature map, which
        // is also a top-down bitmap of size 13x13. We move the kernel by TWO pixels, i.e., we
        // skip every other pixel in the input image
        int[] kernelTemplate = new int[25] {
            0, 1, 2, 3, 4,
            29, 30, 31, 32, 33,
            58, 59, 60, 61, 62,
            87, 88, 89, 90, 91,
            116, 117, 118, 119, 120 };
        int iNumWeight;
        int fm;
        for (fm = 0; fm < 6; fm++)
        {
            for (ii = 0; ii < 13; ii++)
            {
                for (jj = 0; jj < 13; jj++)
                {
                    iNumWeight = fm * 26; // 26 is the number of weights per feature map
                    NNNeuron n = pLayer.m_Neurons[jj + ii * 13 + fm * 169];
                    n.AddConnection((uint)MyDefinations.ULONG_MAX, (uint)iNumWeight++); // bias weight
                    for (kk = 0; kk < 25; kk++)
                    {
                        // note: max val of index == 840, corresponding to 841 neurons in prev layer
                        n.AddConnection((uint)(2 * jj + 58 * ii + kernelTemplate[kk]), (uint)iNumWeight++);
                    }
                }
            }
        }

        // layer two:
        // This layer is a convolutional layer that has 50 feature maps. Each feature
        // map is 5x5, and each unit in the feature maps is a 5x5 convolutional kernel
        // of corresponding areas of all 6 of the previous layers, each of which is a 13x13 feature map
        // So, there are 5x5x50 = 1250 neurons, (5x5+1)x6x50 = 7800 weights
        pLayer = new NNLayer("Layer02", pLayer);
        network.m_Layers.Add(pLayer);
        for (ii = 0; ii < 1250; ii++)
        {
            sLabel = String.Format("Layer02_Neuron{0}_Num{1}", ii, icNeurons);
            pLayer.m_Neurons.Add(new NNNeuron(sLabel));
            icNeurons++;
        }
        for (ii = 0; ii < 7800; ii++)
        {
            sLabel = String.Format("Layer02_Weight{0}_Num{1}", ii, icWeights);
            initWeight = 0.05 * (2.0 * m_rdm.NextDouble() - 1.0);
            pLayer.m_Weights.Add(new NNWeight(sLabel, initWeight));
        }

        // Interconnections with previous layer: this is difficult
        // Each feature map in the previous layer is a top-down bitmap image whose size
        // is 13x13, and there are 6 such feature maps. Each neuron in one 5x5 feature map of this
        // layer is connected to a 5x5 kernel positioned correspondingly in all 6 parent
        // feature maps, and there are individual weights for the six different 5x5 kernels. As
        // before, we move the kernel by TWO pixels, i.e., we
        // skip every other pixel in the input image. The result is 50 different 5x5 top-down bitmap
        // feature maps
        int[] kernelTemplate2 = new int[25]{
            0, 1, 2, 3, 4,
            13, 14, 15, 16, 17,
            26, 27, 28, 29, 30,
            39, 40, 41, 42, 43,
            52, 53, 54, 55, 56 };
        for (fm = 0; fm < 50; fm++)
        {
            for (ii = 0; ii < 5; ii++)
            {
                for (jj = 0; jj < 5; jj++)
                {
                    iNumWeight = fm * 156; // 156 = 6 x 26 weights are reserved per feature map
                    NNNeuron n = pLayer.m_Neurons[jj + ii * 5 + fm * 25];
                    n.AddConnection((uint)MyDefinations.ULONG_MAX, (uint)iNumWeight++); // bias weight
                    for (kk = 0; kk < 25; kk++)
                    {
                        // note: max val of index == 1013, corresponding to 1014 neurons in prev layer
                        n.AddConnection((uint)(2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                        n.AddConnection((uint)(169 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                        n.AddConnection((uint)(338 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                        n.AddConnection((uint)(507 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                        n.AddConnection((uint)(676 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                        n.AddConnection((uint)(845 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                    }
                }
            }
        }

        // layer three:
        // This layer is a fully-connected layer with 100 units. Since it is fully-connected,
        // each of the 100 neurons in the layer is connected to all 1250 neurons in
        // the previous layer.
        // So, there are 100 neurons and 100*(1250+1)=125100 weights
        pLayer = new NNLayer("Layer03", pLayer);
        network.m_Layers.Add(pLayer);
        for (ii = 0; ii < 100; ii++)
        {
            sLabel = String.Format("Layer03_Neuron{0}_Num{1}", ii, icNeurons);
            pLayer.m_Neurons.Add(new NNNeuron(sLabel));
            icNeurons++;
        }
        for (ii = 0; ii < 125100; ii++)
        {
            sLabel = String.Format("Layer03_Weight{0}_Num{1}", ii, icWeights);
            initWeight = 0.05 * (2.0 * m_rdm.NextDouble() - 1.0);
            pLayer.m_Weights.Add(new NNWeight(sLabel, initWeight));
        }

        // Interconnections with previous layer: fully-connected
        iNumWeight = 0; // weights are not shared in this layer
        for (fm = 0; fm < 100; fm++)
        {
            NNNeuron n = pLayer.m_Neurons[fm];
            n.AddConnection((uint)MyDefinations.ULONG_MAX, (uint)iNumWeight++); // bias weight
            for (ii = 0; ii < 1250; ii++)
            {
                n.AddConnection((uint)ii, (uint)iNumWeight++);
            }
        }

        // layer four, the final (output) layer:
        // This layer is a fully-connected layer with 10 units. Since it is fully-connected,
        // each of the 10 neurons in the layer is connected to all 100 neurons in
        // the previous layer.
        // So, there are 10 neurons and 10*(100+1)=1010 weights
        pLayer = new NNLayer("Layer04", pLayer);
        network.m_Layers.Add(pLayer);
        for (ii = 0; ii < 10; ii++)
        {
            sLabel = String.Format("Layer04_Neuron{0}_Num{1}", ii, icNeurons);
            pLayer.m_Neurons.Add(new NNNeuron(sLabel));
            icNeurons++;
        }
        for (ii = 0; ii < 1010; ii++)
        {
            sLabel = String.Format("Layer04_Weight{0}_Num{1}", ii, icWeights);
            initWeight = 0.05 * (2.0 * m_rdm.NextDouble() - 1.0);
            pLayer.m_Weights.Add(new NNWeight(sLabel, initWeight));
        }

        // Interconnections with previous layer: fully-connected
        iNumWeight = 0; // weights are not shared in this layer
        for (fm = 0; fm < 10; fm++)
        {
            var n = pLayer.m_Neurons[fm];
            n.AddConnection((uint)MyDefinations.ULONG_MAX, (uint)iNumWeight++); // bias weight
            for (ii = 0; ii < 100; ii++)
            {
                n.AddConnection((uint)ii, (uint)iNumWeight++);
            }
        }
        return true;
    }
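
The hard-coded kernel templates and index expressions in the listing above follow a simple pattern, which the sketch below spells out. `KernelIndexSketch` and its parameter names are hypothetical and not part of either program; the sketch assumes square, row-major feature maps, as the comments in the listing describe.

```csharp
using System;

// Hypothetical helper that reproduces the index arithmetic of the listing above.
public static class KernelIndexSketch
{
    // Offsets of a kernelSize x kernelSize window inside a row-major map of width mapWidth.
    // For mapWidth = 29 and kernelSize = 5 this yields 0..4, 29..33, 58..62, 87..91, 116..120.
    public static int[] BuildKernelTemplate(int mapWidth, int kernelSize)
    {
        var template = new int[kernelSize * kernelSize];
        for (int r = 0; r < kernelSize; r++)
            for (int c = 0; c < kernelSize; c++)
                template[r * kernelSize + c] = r * mapWidth + c;
        return template;
    }

    // Index, in the previous layer, of the k-th input of the neuron at (row, col).
    // The window moves by `stride` pixels per neuron; sourceMap selects one of the
    // previous layer's feature maps (each holds mapWidth * mapWidth neurons).
    public static int SourceIndex(int row, int col, int k, int mapWidth, int stride,
                                  int[] template, int sourceMap = 0)
    {
        return sourceMap * mapWidth * mapWidth
             + stride * col
             + stride * mapWidth * row
             + template[k];
    }
}
```

With `mapWidth = 29` and `stride = 2` the expression reduces to `2 * jj + 58 * ii + kernelTemplate[kk]`, and with `mapWidth = 13` to `2 * jj + 26 * ii + kernelTemplate2[kk]` plus 169 per source map, matching the listing.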

The CreateNetwork function in the current demo, using this library:

    private List<Char> Letters2 = new List<Char>(36) { 'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H',
        'I', 'J', 'K', 'L', 'M', 'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z',
        '0', '1', '2', '3', '4', '5', '6', '7', '8', '9' };

    private List<Char> Letters = new List<Char>(62) { 'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H',
        'I', 'J', 'K', 'L', 'M', 'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z',
        'a', 'b', 'c', 'd', 'e', 'f', 'g', 'h', 'i', 'j', 'k', 'l', 'm', 'n', 'o', 'p', 'q', 'r',
        's', 't', 'u', 'v', 'w', 'x', 'y', 'z', '0', '1', '2', '3', '4', '5', '6', '7', '8', '9' };

    private List<Char> Letters1 = new List<Char>(10) { '0', '1', '2', '3', '4', '5', '6', '7', '8', '9' };

    void CreateNetwork1()
    {
        network = new ConvolutionNetwork();
        //layer 0: inputlayer
        network.Layers = new NNLayer[5];
        network.LayerCount = 5;
        NNLayer layer = new NNLayer("00-Layer Input", null, new Size(29, 29), 1, 5);
        network.InputDesignedPatternSize = new Size(29, 29);
        layer.Initialize();
        network.Layers[0] = layer;

        layer = new NNLayer("01-Layer ConvolutionalSubsampling", layer, new Size(13, 13), 6, 5);
        layer.Initialize();
        network.Layers[1] = layer;

        layer = new NNLayer("02-Layer ConvolutionalSubsampling", layer, new Size(5, 5), 50, 5);
        layer.Initialize();
        network.Layers[2] = layer;

        layer = new NNLayer("03-Layer FullConnected", layer, new Size(1, 100), 1, 5);
        layer.Initialize();
        network.Layers[3] = layer;

        layer = new NNLayer("04-Layer FullConnected", layer, new Size(1, Letters1.Count), 1, 5);
        layer.Initialize();
        network.Layers[4] = layer;

        network.TagetOutputs = Letters1;
    }
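
The `TagetOutputs` list pairs each output neuron with a character. How the library reads the result is not shown in the article; the sketch below assumes the simplest rule, that the output neuron with the highest activation wins. `OutputDecodingSketch` is a hypothetical name.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper; the winner-takes-all rule is an assumption, not the library's documented behavior.
public static class OutputDecodingSketch
{
    // Returns the character whose output neuron has the highest activation.
    public static char Decode(IReadOnlyList<double> outputs, IReadOnlyList<char> targets)
    {
        if (outputs.Count == 0 || outputs.Count != targets.Count)
            throw new ArgumentException("outputs and targets must be non-empty and of equal length");

        int best = 0;
        for (int i = 1; i < outputs.Count; i++)
        {
            if (outputs[i] > outputs[best]) best = i;
        }
        return targets[best];
    }
}
```

Under this assumption, switching from a digits-only network to a letters network only requires passing a different character list of matching length.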

In the current version, if I want to create a network that recognizes not only the 10 digits but also letters (62 outputs in total), I simply add a few more layers and change some parameters, as follows:

    void CreateNetwork()
    {
        network = new ConvolutionNetwork();
        //layer 0: inputlayer
        network.Layers = new NNLayer[6];
        network.LayerCount = 6;
        NNLayer layer = new NNLayer("00-Layer Input", null, new Size(29, 29), 1, 5);
        network.InputDesignedPatternSize = new Size(29, 29);
        layer.Initialize();
        network.Layers[0] = layer;

        layer = new NNLayer("01-Layer ConvolutionalSubsampling", layer, new Size(13, 13), 10, 5);
        layer.Initialize();
        network.Layers[1] = layer;

        layer = new NNLayer("02-Layer ConvolutionalSubsampling", layer, new Size(5, 5), 60, 5);
        layer.Initialize();
        network.Layers[2] = layer;

        layer = new NNLayer("03-Layer FullConnected", layer, new Size(1, 300), 1, 5);
        layer.Initialize();
        network.Layers[3] = layer;

        layer = new NNLayer("04-Layer FullConnected", layer, new Size(1, 200), 1, 5);
        layer.Initialize();
        network.Layers[4] = layer;

        layer = new NNLayer("05-Layer FullConnected", layer, new Size(1, Letters.Count), 1, 5);
        layer.Initialize();
        network.Layers[5] = layer;

        network.TagetOutputs = Letters;
    }

We can change all network parameters, such as the number of layers, the input pattern size, the number of feature maps, the kernel size in convolutional layers, the number of neurons in a layer, and the number of outputs, to find the network that suits us best. Changing the network does not affect the forward propagation or back propagation classes.
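
When changing these parameters, it helps to recheck the layer sizes. The sketch below collects the arithmetic stated in the comments of the earlier listing (a 5x5 kernel moved by two pixels, one bias per kernel); `LayerArithmeticSketch` is a hypothetical helper, not part of the library, and it assumes no padding.

```csharp
using System;

// Hypothetical helper that restates the size formulas from the listing's comments.
public static class LayerArithmeticSketch
{
    // Side length of a feature map produced by a kernel moved `stride` pixels at a time.
    public static int OutputSide(int inputSide, int kernelSize, int stride)
    {
        return (inputSide - kernelSize) / stride + 1;
    }

    // Weights of a convolutional layer: one kernel plus one bias
    // for every pair of input map and output map.
    public static int ConvolutionWeights(int kernelSize, int inputMaps, int outputMaps)
    {
        return (kernelSize * kernelSize + 1) * inputMaps * outputMaps;
    }

    // Weights of a fully connected layer: every output sees every input plus a bias.
    public static int FullyConnectedWeights(int inputs, int outputs)
    {
        return outputs * (inputs + 1);
    }
}
```

For the digit network this gives 29 to 13 to 5 for the map sides, 156 and 7800 convolution weights, and 125100 and 1010 fully connected weights, the same figures as in the comments of the previous program.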

Experiment with the library:

The demo program presents the two main functions of the library: the UNIPEN data browser, and convolution neural network training and testing. The input data is the UNIPEN trainset, which can be downloaded from the website http://unipen.nici.kun.nl/ . For the demo program to run correctly, the trainset folder has to be renamed to UnipenData.

Picture 4: UNIPEN data browser

To browse all data, simply select the Data folder in UnipenData. The recognition function is activated by loading a network parameters file. Depending on the network file, the program recognizes digits only, or all capital letters plus digits.

Picture 5: Convolution network training

The default convolution network is the 62-output network. You can change the network by loading one of the attached network parameters files. To get the correct training data for, say, the 36-output network (capital letters and digits), you should delete all folders in the Data folder except 1a and 1b (the folders of digits and capital letters).

In my experiments the results are rather good: 88% accuracy on capital letters plus digits, and 97% on digits alone. I could not run the experiment on the 62-output network because my laptop nearly burned out when I trained it.

Points of Interest

Like a human brain, an artificial intelligence system cannot be built as one single neural network with billions of neurons that solves every different problem. It will consist of several small networks, each solving a separate problem. My library has this capacity, so I hope that one day it can be applied not only to my daughter's program but also to a real system.

At the moment, this project is sponsored by my university as a small annual research project. I am looking for a donation or scholarship to continue it. I would highly appreciate it if someone interested in this project could help develop it further.

Votes and comments on my article are welcome.

History

Library version 1.0: initial code.

Version 1.01: bug fixes (the Unipen library now reads NicIcon and UJI-Penchar files correctly), character segmentation functions added to the Unipen library, bug fixes in the neuron library. Network parameters from earlier versions are not compatible with the current version. If you downloaded the version 1.0 demo, please download all files again.

Version 2.0, which can recognize 62 characters on a mouse drawing control (picture 1), will be posted in an upcoming article, "Large pattern recognition system using multi neural networks".

License

This article, along with all associated source code and files, is licensed under The Code Project Open License (CPOL).

C#4.0 C# Win7 Windows Dev machine-learning News Translation