Linear Regression with CNTK and C#


Original article: https://www.codeproject.com/Articles/1257176/Linear-Regression-with-CNTK-and-Csharp

Original author: Bahrudin Hrnjica


- Download source code (external link)

CNTK is Microsoft's deep learning tool for training very large and complex neural network models. However, you can use CNTK for various other purposes. In some previous posts, we have seen how to use CNTK to perform matrix multiplication in order to calculate descriptive statistics of a data set.

In this blog post, we are going to implement a simple linear regression (LR) model. The model contains only one neuron plus a bias, so in total the linear regression has only two parameters: w and b.

下图显示了LR模型:(The image below shows LR model:)

我们使用CNTK解决如此简单的任务的原因非常简单.通过学习像这样的简单模型,我们可以看到CNTK库是如何工作的,并可以看到CNTK中一些不太重要的动作.(The reason why we use the CNTK to solve such a simple task is very straightforward. Learning on simple models like this one, we can see how the CNTK library works, and see some of the not-so-trivial actions in CNTK.)

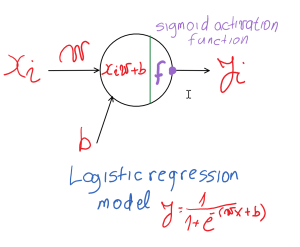

通过添加激活函数,可以将上面显示的模型轻松扩展为逻辑回归模型.除了表示没有激活函数的神经网络配置的线性回归外,逻辑回归是包括激活函数的最简单的神经网络配置.(The model shown above can be easily extend to logistic regression model, by adding activation function. Besides the linear regression which represent the neural network configuration without activation function, the Logistic Regression is the simplest neural network configuration which includes activation function.)

下图显示了逻辑回归模型:(The following image shows logistic regression model:)

如果您想了解有关如何使用CNTK创建Logistic回归的更多信息,请参见此官方演示(In case you want to see more information about how to create Logistic Regression with CNTK, you can see this official demo) 例.(example.)

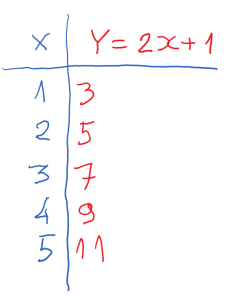
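As a brief illustration of the difference between the two models, the relation below writes both out for a single input x. It assumes the sigmoid as the activation function, which is the usual choice for logistic regression; the article itself does not name the activation.

$$
\hat{y}_{\text{linear}} = w x + b,
\qquad
\hat{y}_{\text{logistic}} = \sigma(w x + b),
\qquad
\sigma(z) = \frac{1}{1 + e^{-z}}
$$

Both models have the same two parameters, w and b; only the output transformation differs.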

Now that we have introduced the neural network models, we can start by defining the data set. Assume we have a simple data set that represents the linear function y = 2x + 1. The generated data set is shown in the following table:

| x | y |
|---|---|
| 1 | 3 |
| 2 | 5 |
| 3 | 7 |
| 4 | 9 |
| 5 | 11 |

We already know that the linear regression parameters for this data set are b = 1 and w = 2, so we want to use the CNTK library to recover those values, or at least parameter values that are very close to them.
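The reference values for this data set can be checked without CNTK. The following is a minimal sketch, assuming the textbook closed-form least squares formulas for a single input variable; the class `LeastSquaresSketch` and its `Fit` method are hypothetical helpers introduced here for illustration and are not part of CNTK or the original article.

```csharp
using System;
using System.Linq;

// Hypothetical helper for illustration only; not part of CNTK.
public static class LeastSquaresSketch
{
    // Fits y ≈ w * x + b with the closed-form least squares solution:
    //   w = Σ(x - mean(x)) * (y - mean(y)) / Σ(x - mean(x))^2
    //   b = mean(y) - w * mean(x)
    public static (double W, double B) Fit(double[] xs, double[] ys)
    {
        if (xs.Length != ys.Length || xs.Length < 2)
            throw new ArgumentException("xs and ys must have equal length of at least 2.");

        double meanX = xs.Average();
        double meanY = ys.Average();

        double sxy = 0.0; // sum of products of deviations
        double sxx = 0.0; // sum of squared deviations of x
        for (int i = 0; i < xs.Length; i++)
        {
            double dx = xs[i] - meanX;
            sxy += dx * (ys[i] - meanY);
            sxx += dx * dx;
        }

        if (sxx == 0.0)
            throw new ArgumentException("xs must contain at least two distinct values.");

        double w = sxy / sxx;
        double b = meanY - w * meanX;
        return (w, b);
    }
}
```

Calling `LeastSquaresSketch.Fit(new double[] { 1, 2, 3, 4, 5 }, new double[] { 3, 5, 7, 9, 11 })` on the five points used in this article yields w = 2 and b = 1, the values the CNTK model is expected to approach.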

The work of developing the LR model with CNTK can be described in several steps:

Step 1

Create a C# Console application in Visual Studio, change the current architecture to x64, and add the latest “CNTK.GPU” NuGet package to the solution. The original article shows these actions in a Visual Studio screenshot.

Step 2

Start writing code by adding two variables, feature and label. Once the variables are defined, define the training data set by creating a batch. The following code snippets show how to create the variables and the batch, and how to start writing CNTK-based C# code.

First, we need to add some using statements and define the device on which the computation will run. We can choose the CPU, or the GPU if the machine contains an NVIDIA-compatible graphics card. The demo starts with the following code snippet:

    using System;
    using System.Linq;
    using System.Collections.Generic;
    using CNTK;

    namespace LR_CNTK_Demo
    {
        class Program
        {
            static void Main(string[] args)
            {
                //Step 1: Create some Demo helpers
                Console.Title = "Linear Regression with CNTK!";
                Console.WriteLine("#### Linear Regression with CNTK! ####");
                Console.WriteLine("");

                //define device
                var device = DeviceDescriptor.UseDefaultDevice();

Now define the two variables and the data set presented in the table above:

                //Step 2: define values, and variables
                Variable x = Variable.InputVariable(new int[] { 1 }, DataType.Float, "input");
                Variable y = Variable.InputVariable(new int[] { 1 }, DataType.Float, "output");

                //Step 2: define training data set from table above
                var xValues = Value.CreateBatch(new NDShape(1, 1), new float[] { 1f, 2f, 3f, 4f, 5f }, device);
                var yValues = Value.CreateBatch(new NDShape(1, 1), new float[] { 3f, 5f, 7f, 9f, 11f }, device);

Step 3

Create the linear regression network model by passing in the input variable and the device used for computation. As already discussed, the model consists of one neuron and one bias parameter. The following method implements the LR network model:

        private static Function createLRModel(Variable x, DeviceDescriptor device)
        {
            //initializer for parameters
            var initV = CNTKLib.GlorotUniformInitializer(1.0, 1, 0, 1);

            //bias
            var b = new Parameter(new NDShape(1, 1), DataType.Float, initV, device, "b");

            //weights
            var W = new Parameter(new NDShape(2, 1), DataType.Float, initV, device, "w");

            //matrix product
            var Wx = CNTKLib.Times(W, x, "wx");

            //layer
            var l = CNTKLib.Plus(b, Wx, "wx_b");

            return l;
        }

First, we create an initializer, which sets the starting values of the network parameters. Then we define the bias and weight parameters, join them in the form of the linear model “wx + b”, and return the result as a Function. The createLRModel function is called in the Main method. Once the model is created, we can examine it and confirm that it has only two parameters. The following code creates the linear regression model and prints the model parameters:

                //Step 3: create linear regression model
                var lr = createLRModel(x, device);

                //Network model contains only two parameters b and w, so we query
                //the model in order to get parameter values
                var paramValues = lr.Inputs.Where(z => z.IsParameter).ToList();
                var totalParameters = paramValues.Sum(c => c.Shape.TotalSize);
                Console.WriteLine($"LRM has {totalParameters} params, {paramValues[0].Name} and {paramValues[1].Name}.");

In the previous code, we have seen how to extract parameters from the model. Once we have the parameters, we can change their values, or just print them for further analysis.

Step 4

Create a Trainer, which will be used to train the network parameters w and b. The following code snippet shows the implementation of the createTrainer method.

        private static Trainer createTrainer(Function network, Variable target)
        {
            //learning rate
            var lrate = 0.082;
            var lr = new TrainingParameterScheduleDouble(lrate);

            //network parameters
            var zParams = new ParameterVector(network.Parameters().ToList());

            //create loss and eval
            Function loss = CNTKLib.SquaredError(network, target);
            Function eval = CNTKLib.SquaredError(network, target);

            //learners
            var llr = new List<Learner>();
            var msgd = Learner.SGDLearner(network.Parameters(), lr);
            llr.Add(msgd);

            //trainer
            var trainer = Trainer.CreateTrainer(network, loss, eval, llr);

            return trainer;
        }

First, we define the learning rate, the main parameter of neural network training. Then we create the loss and evaluation functions. With those in place, we can create the SGD learner. Once the SGD learner object is instantiated, the trainer is created by calling the static CNTK method CreateTrainer and is returned from the function. The createTrainer method is called in the Main method:

                //Step 4: create trainer
                var trainer = createTrainer(lr, y);
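To make the roles of the squared-error loss and the SGD learner concrete, the update rule can be written out for this two-parameter model. This is a sketch of the textbook gradient descent step on a mean squared error over n samples; how CNTK scales or averages the loss internally is not stated in the article, so the factor 2/n should be read as an assumption. Here η denotes the learning rate (0.082 in the code above).

$$
\hat{y}_i = w x_i + b,
\qquad
L(w, b) = \frac{1}{n} \sum_{i=1}^{n} \left( \hat{y}_i - y_i \right)^2
$$

$$
w \leftarrow w - \eta \, \frac{\partial L}{\partial w}
  = w - \frac{2\eta}{n} \sum_{i=1}^{n} \left( \hat{y}_i - y_i \right) x_i,
\qquad
b \leftarrow b - \eta \, \frac{\partial L}{\partial b}
  = b - \frac{2\eta}{n} \sum_{i=1}^{n} \left( \hat{y}_i - y_i \right)
$$

Each training iteration applies one such step to both parameters.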

Step 5

Training process: once the variables, data set, network model and trainer are defined, the training process can start.

                //Step 5: training
                for (int i = 1; i <= 200; i++)
                {
                    var d = new Dictionary<Variable, Value>();
                    d.Add(x, xValues);
                    d.Add(y, yValues);

                    trainer.TrainMinibatch(d, true, device);

                    var loss = trainer.PreviousMinibatchLossAverage();
                    var eval = trainer.PreviousMinibatchEvaluationAverage();

                    if (i % 20 == 0)
                        Console.WriteLine($"It={i}, Loss={loss}, Eval={eval}");

                    if (i == 200)
                    {
                        //print weights, labelled with the parameter names
                        var b0_name = paramValues[0].Name;
                        var b0 = new Value(paramValues[0].GetValue()).GetDenseData<float>(paramValues[0]);
                        var b1_name = paramValues[1].Name;
                        var b1 = new Value(paramValues[1].GetValue()).GetDenseData<float>(paramValues[1]);

                        Console.WriteLine($" ");
                        Console.WriteLine($"Training process finished with the following regression parameters:");
                        Console.WriteLine($"{b0_name}={b0[0][0]}, {b1_name}={b1[0][0]}");
                        Console.WriteLine($" ");
                    }
                }
            }

As can be seen, in just 200 iterations the regression parameters came very close to the values we expected, b = 1 and w = 2. Since the training process differs from classical regression parameter estimation, we cannot get the exact values. To estimate the regression parameters, the neural network uses an iterative method called Stochastic Gradient Descent (SGD). Classical regression, on the other hand, minimizes the least squares error analytically and solves a system of equations in which the unknowns are b and w.
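The iterative estimation described above can be illustrated without CNTK. The following is a minimal sketch, assuming full-batch gradient descent on the mean squared error, a starting point of w = 0 and b = 0, and the same learning rate and iteration count as the demo; the class `GradientDescentSketch` is a hypothetical helper, and CNTK's own initialization and loss scaling may differ.

```csharp
using System;

// Hypothetical helper for illustration only; not part of CNTK.
public static class GradientDescentSketch
{
    // Fits y ≈ w * x + b by full-batch gradient descent on the mean squared error.
    public static (double W, double B) Fit(double[] xs, double[] ys,
                                           double learningRate, int iterations)
    {
        if (xs.Length != ys.Length || xs.Length == 0)
            throw new ArgumentException("xs and ys must be non-empty and of equal length.");

        // Assumed starting point; the article's model uses a Glorot initializer instead.
        double w = 0.0, b = 0.0;
        int n = xs.Length;

        for (int it = 0; it < iterations; it++)
        {
            double gradW = 0.0, gradB = 0.0;
            for (int i = 0; i < n; i++)
            {
                double error = w * xs[i] + b - ys[i]; // prediction minus target
                gradW += 2.0 * error * xs[i] / n;     // d(MSE)/dw contribution
                gradB += 2.0 * error / n;             // d(MSE)/db contribution
            }

            // Both gradients are computed before either parameter is updated.
            w -= learningRate * gradW;
            b -= learningRate * gradB;
        }

        return (w, b);
    }
}
```

Calling `GradientDescentSketch.Fit(new double[] { 1, 2, 3, 4, 5 }, new double[] { 3, 5, 7, 9, 11 }, 0.082, 200)` returns values close to, but not exactly, w = 2 and b = 1, which mirrors the behaviour of the iterative training. For this data set the step is only stable for learning rates below roughly 0.0845 under the assumptions above, so larger rates would make the loop diverge.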

Once all the code above is implemented, we can start the LR demo by pressing F5. The output window should show the loss and evaluation values every 20 iterations, followed by the final regression parameters.

I hope this blog post provides enough information to get started with CNTK, C# and machine learning. The source code for this blog post can be downloaded here.

License

This article, along with any associated source code and files, is licensed under The Code Project Open License (CPOL).
