[Translation] Neural Network for Recognition of Handwritten Digits in C#

By robot-v1.0

Permalink: https://www.kyfws.com/ai/neural-network-for-recognition-of-handwritten-di-2-zh/

Copyright notice: unless otherwise stated, all articles on this blog are licensed under BY-NC-SA. Please credit the source when reposting!


Original article: https://www.codeproject.com/Articles/143059/Neural-Network-for-Recognition-of-Handwritten-Di-2

Original author: Vietdungiitb

Translated by this site's robot-v1.0

Preface

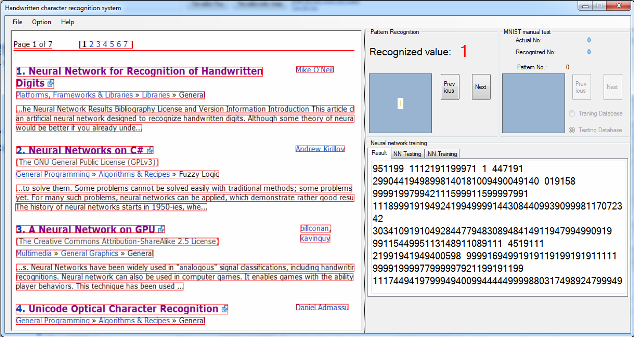

This article is an example of an artificial neural network designed to recognize handwritten digits.


Introduction

This article is another example of an artificial neural network designed to recognize handwritten digits, based on the brilliant article Neural Network for Recognition of Handwritten Digits by Mike O’Neill. Although many systems and classification algorithms have been proposed in recent years, handwriting recognition remains a challenging task in pattern recognition. Mike O’Neill’s program is an excellent demo for programmers who want to study neural networks for pattern recognition in general, and convolutional neural networks in particular. The program is written in MFC/C++, which is a little difficult for anyone unfamiliar with it, so I decided to rewrite it in C# along with some of my own experiments. My program achieves results close to those of the original, but it is still not well optimized (convergence speed, error rate, etc.). It is initial code that simply gets the job done and helps in understanding the network, so it is rather confusing and needs to be restructured. I have been trying to rebuild it as a library so that it is flexible and its parameters are easy to change through an INI file. Hopefully, I can get the expected result someday.

Character Detection

Pattern detection, or character candidate detection, is one of the most important problems I had to face in my program. I did not just want to rewrite Mike’s program in another language; I also wanted to recognize characters in a picture of a document. I found some research on the Internet proposing very good object detection algorithms, but they are too complicated for a spare-time project like mine. A small algorithm I came across while teaching my daughter to draw solved my problem. It still has limitations, of course, but it exceeded my expectations in the first test. Normally, character candidate detection is divided into row detection, word detection, and character detection, each with its own algorithm. My approach is a little different: all three detections use the same algorithm, through the functions:

public static Rectangle GetPatternRectangeBoundary(Bitmap original, int colorIndex, int hStep, int vStep, bool bTopStart)

and:

public static List<Rectangle> PatternRectangeBoundaryList(Bitmap original, int colorIndex, int hStep, int vStep, bool bTopStart, int widthMin, int heightMin)

Rows, words, or characters can be detected simply by changing the parameters hStep (horizontal step) and vStep (vertical step). Rectangle boundaries can also be detected from top to bottom or from left to right by setting bTopStart to true or false. Rectangles can be limited in size by the widthMin and heightMin parameters. The biggest advantage of my algorithm is that it can detect words or character groups that do not lie in the same row.

Character candidate recognition can be performed by the following function:

public void PatternRecognitionThread(Bitmap bitmap)

{

_originalBitmap = bitmap;

if (_rowList == null)

{

_rowList = AForge.Imaging.Image.PatternRectangeBoundaryList

(_originalBitmap,255, 30, 1, true, 5, 5);

_irowIndex = 0;

}

foreach(Rectangle rowRect in _rowList)

{

_currentRow = AForge.Imaging.ImageResize.ImageCrop

(_originalBitmap, rowRect);

if (_iwordIndex == 0)

{

_currentWordsList = AForge.Imaging.Image.PatternRectangeBoundaryList

(_currentRow, 255, 20, 10, false, 5, 5);

}

foreach (Rectangle wordRect in _currentWordsList)

{

_currentWord = AForge.Imaging.ImageResize.ImageCrop

(_currentRow, wordRect);

_iwordIndex++;

if (_icharIndex == 0)

{

_currentCharsList =

AForge.Imaging.Image.PatternRectangeBoundaryList

(_currentWord, 255, 1, 1, false, 5, 5);

}

foreach (Rectangle charRect in _currentCharsList)

{

_currentChar = AForge.Imaging.ImageResize.ImageCrop

(_currentWord, charRect);

_icharIndex++;

Bitmap bmptemp = AForge.Imaging.ImageResize.FixedSize

(_currentChar, 21, 21);

bmptemp = AForge.Imaging.Image.CreateColorPad

(bmptemp,Color.White, 4, 4);

bmptemp = AForge.Imaging.Image.CreateIndexedGrayScaleBitmap

(bmptemp);

byte[] graybytes = AForge.Imaging.Image.GrayscaletoBytes(bmptemp);

PatternRecognitionThread(graybytes);

m_bitmaps.Add(bmptemp);

}

string s = " \n";

_form.Invoke(_form._DelegateAddObject, new Object[] { 1, s });

if (_icharIndex == _currentCharsList.Count)

{

_icharIndex =0;

}

}

if (_iwordIndex == _currentWordsList.Count)

{

_iwordIndex=0;

}

}

}
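The normalization at the end of the inner loop resizes each character to 21x21 and then pads it. If the padding is 4 pixels on every side, that gives 21 + 4 + 4 = 29 pixels per dimension, which is the network's input size. The following is a minimal sketch of that padding step on a plain array. `PatternPadding.Pad` is a hypothetical helper, not part of AForge.NET, and the reading of `CreateColorPad(bmptemp, Color.White, 4, 4)` as "4 pixels per side" is an assumption inferred only from the 29x29 input size.

```csharp
using System;

public static class PatternPadding
{
    // Hypothetical helper: surrounds a grayscale image with a constant
    // border of `pad` pixels on every side (assumed behaviour of the
    // article's CreateColorPad call).
    public static byte[,] Pad(byte[,] source, int pad, byte fill)
    {
        if (source == null) throw new ArgumentNullException(nameof(source));
        if (pad < 0) throw new ArgumentOutOfRangeException(nameof(pad));

        int rows = source.GetLength(0);
        int cols = source.GetLength(1);
        var result = new byte[rows + 2 * pad, cols + 2 * pad];

        // Fill the whole canvas with the border colour first.
        for (int r = 0; r < result.GetLength(0); r++)
            for (int c = 0; c < result.GetLength(1); c++)
                result[r, c] = fill;

        // Copy the original pixels into the centre of the canvas.
        for (int r = 0; r < rows; r++)
            for (int c = 0; c < cols; c++)
                result[r + pad, c + pad] = source[r, c];

        return result;
    }
}
```

For example, `PatternPadding.Pad(new byte[21, 21], 4, 255)` returns a 29x29 array whose border pixels are white (255).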

Character Recognition

The convolutional neural network (CNN) in the original program is essentially a CNN with five layers, including the input layer. The details of the convolutional architecture are described by Mike in his article and by Dr. Simard in “Best Practices for Convolutional Neural Networks Applied to Visual Document Analysis”. The general strategy of this convolutional network is to extract simple features at a higher resolution and then convert them into more complex features at a coarser resolution. The simplest way to generate a coarser resolution is to sub-sample a layer by a factor of 2. This, in turn, is a clue to the size of the convolution kernel. The kernel width is chosen to be centered on a unit (odd size), to have enough overlap not to lose information (3 would be too small, with only one unit of overlap), yet not to cause redundant computation (7 would be too large, with 5 units, or over 70%, of overlap). A convolution kernel of size 5 is therefore chosen for this network. Padding the input (making it larger so that there are feature units centered on the border) did not improve performance significantly. With no padding, a sub-sampling of 2, and a kernel size of 5, each convolution layer reduces the feature size from n to (n-3)/2. Since the initial MNIST input size is 28x28, the nearest value that yields an integer size after 2 layers of convolution is 29x29.

After 2 layers of convolution, the feature size of 5x5 is too small for a third layer of convolution. Dr. Simard also emphasized that fewer than five different features in the first layer decreased performance, while using more than 5 did not improve it (Mike used 6 features). Similarly, on the second layer, fewer than 50 features decreased performance, while more (100 features) did not improve it. A summary of the neural network is as follows:
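The size rule n → (n-3)/2 can be checked with a few lines of C#. This is only an illustrative sketch: the class and method names are hypothetical, and it uses the general unpadded-convolution formula (n - kernel)/stride + 1, which equals (n-3)/2 for a kernel of 5 and a stride of 2.

```csharp
using System;

public static class FeatureMapSize
{
    // Output width of an unpadded convolution with the given kernel and stride.
    // For kernel = 5 and stride = 2 this is (n - 5) / 2 + 1 = (n - 3) / 2.
    public static int After(int n, int kernel = 5, int stride = 2)
    {
        if (n < kernel) throw new ArgumentOutOfRangeException(nameof(n));
        return (n - kernel) / stride + 1;
    }

    // True when the kernel positions tile the input exactly (integer result).
    public static bool FitsExactly(int n, int kernel = 5, int stride = 2)
        => n >= kernel && (n - kernel) % stride == 0;

    // Smallest input size >= start for which two stacked layers both fit exactly.
    public static int NearestValidInput(int start, int kernel = 5, int stride = 2)
    {
        for (int n = Math.Max(start, kernel); ; n++)
        {
            if (!FitsExactly(n, kernel, stride)) continue;
            int first = After(n, kernel, stride);
            if (FitsExactly(first, kernel, stride)) return n;
        }
    }
}
```

Starting from the 28x28 MNIST size, `NearestValidInput(28)` gives 29, and the two layers then produce 13x13 and 5x5 feature maps, matching the sizes quoted in the text.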

Layer #0: the grayscale image of a handwritten character from the MNIST database, padded to 29x29 pixels. There are 29x29 = 841 neurons in the input layer.

Layer #1: a convolutional layer with six (6) feature maps. There are 13x13x6 = 1014 neurons, (5x5+1)x6 = 156 weights, and 1014x26 = 26364 connections from layer #1 to the previous layer.

Layer #2: a convolutional layer with fifty (50) feature maps. There are 5x5x50 = 1250 neurons, (5x5+1)x6x50 = 7800 weights, and 1250x(5x5x6+1) = 188750 connections from layer #2 to the previous layer (not the 32500 connections given in Mike’s article).

Layer #3: a fully-connected layer with 100 units. There are 100 neurons, 100x(1250+1) = 125100 weights, and 100x1251 = 125100 connections.

Layer #4: the final layer. There are 10 neurons, 10x(100+1) = 1010 weights, and 10x101 = 1010 connections.
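The counts in this summary follow directly from the layer shapes. The sketch below reproduces the article's arithmetic; the `LayerCounts` class is a hypothetical illustration and is not taken from the program's source. Note that it follows the article's way of counting weights (one 5x5 kernel plus a bias per input-map/output-map pair).

```csharp
public static class LayerCounts
{
    // Convolutional layer with shared weights.
    // Weights: (kernel*kernel + 1) per input-map/output-map pair, as counted in the article.
    // Connections: every neuron sees kernel*kernel pixels in each input map, plus one bias.
    public static (int Neurons, int Weights, int Connections) Convolutional(
        int mapSize, int maps, int inputMaps, int kernel = 5)
    {
        int neurons = mapSize * mapSize * maps;
        int weights = (kernel * kernel + 1) * inputMaps * maps;
        int connections = neurons * (kernel * kernel * inputMaps + 1);
        return (neurons, weights, connections);
    }

    // Fully-connected layer: no weight sharing, so weights == connections.
    public static (int Neurons, int Weights, int Connections) FullyConnected(
        int units, int inputs)
    {
        int weights = units * (inputs + 1); // +1 for the bias
        return (units, weights, weights);
    }
}
```

Calling `Convolutional(13, 6, 1)`, `Convolutional(5, 50, 6)`, `FullyConnected(100, 1250)` and `FullyConnected(10, 100)` yields the figures listed for layers #1 to #4.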

Back Propagation

Back propagation is the process that updates the weights of each layer, starting with the last layer and moving backwards through the layers until the first layer is reached.

In standard back propagation, each weight is updated according to the following formula:

$$ (w_{ki})_{new} = (w_{ki})_{old} - \eta \, \frac{\partial E}{\partial w_{ki}} \qquad (1) $$

where $\eta$ (eta) is the “learning rate”, typically a small number like 0.0005 that is gradually decreased during training. However, the original program does not use standard back propagation, because it converges slowly. Instead, it applies a second-order technique called the “stochastic diagonal Levenberg-Marquardt method”, proposed by Dr. LeCun in his article “Efficient BackProp”. Although Mike said that it is not dissimilar to standard back propagation, a little theory should help newcomers like me understand the code more easily.

In the Levenberg-Marquardt method, rw is calculated as follows:

Assuming a squared cost function:

Then the gradient is:

And the Hessian follows as:

A simplifying approximation of the Hessian is the square of the Jacobian, which is a positive semi-definite matrix of dimension N x O.

Back propagation procedures for computing the diagonal Hessian in neural networks are well known. Assume that each layer in the network has the functional form:

Using the Gauss-Newton approximation (dropping the term that contains f''(y)), we obtain:

and:
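The equations for this derivation did not survive in this copy. What follows is a hedged reconstruction, not the author's original typesetting. It assumes the usual squared-cost setup from “Efficient BackProp”, and the layer formulas are written to agree with the comments in the C# function further down (`d2Err_wrt_dYn`, `d2Err_wrt_Wn`, `d2Err_wrt_Xnm1`). The symbols $M$ (the network function), $d^p$ (the target for pattern $p$), $x^p$ (the input pattern), $o_k$ (a layer output) and $F$ (the squashing function) are notation assumed here.

```latex
% Squared cost over the training patterns p
E(w) = \frac{1}{2} \sum_p \left( d^p - M(x^p, w) \right)^T \left( d^p - M(x^p, w) \right)

% Gradient
\frac{\partial E}{\partial w}
  = - \sum_p \left( d^p - M(x^p, w) \right)^T \frac{\partial M(x^p, w)}{\partial w}

% Hessian; the Gauss-Newton approximation keeps only the first (Jacobian-squared) sum
H(w) = \sum_p \left( \frac{\partial M(x^p, w)}{\partial w} \right)^T \frac{\partial M(x^p, w)}{\partial w}
     - \sum_p \left( d^p - M(x^p, w) \right)^T \frac{\partial^2 M(x^p, w)}{\partial w \, \partial w}

% Functional form of one layer
o_k = F(y_k), \qquad y_k = \sum_i w_{ki} \, x_i

% Diagonal second derivatives after dropping the F''(y) term
\frac{\partial^2 E}{\partial y_k^2} \approx \left( F'(y_k) \right)^2 \frac{\partial^2 E}{\partial o_k^2}
\qquad
\frac{\partial^2 E}{\partial w_{ki}^2} \approx x_i^2 \, \frac{\partial^2 E}{\partial y_k^2}

% Quantity passed back to the previous layer
\frac{\partial^2 E}{\partial x_i^2} \approx \sum_k w_{ki}^2 \, \frac{\partial^2 E}{\partial y_k^2}
```

The last three relations are presumably the formulas the text later refers to as (7), (8) and (9). Each one has the same shape as its first-derivative counterpart in ordinary back propagation, with the multiplying factors squared.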

A Stochastic Diagonal Levenberg-Marquardt Method

In fact, techniques that use full Hessian information (Levenberg-Marquardt, Gauss-Newton, etc.) can only be applied to very small networks trained in batch mode, not in stochastic mode. To obtain a stochastic version of the Levenberg-Marquardt algorithm, Dr. LeCun proposed computing the diagonal Hessian through a running estimate of the second derivative with respect to each parameter. The instantaneous second derivative can be obtained via back propagation, as shown in formulas (7, 8, 9). Once we have those running estimates, we can use them to compute an individual learning rate for each parameter:

$$ \eta_{ki} = \frac{\epsilon}{\left\langle \frac{\partial^2 E}{\partial w_{ki}^2} \right\rangle + \mu} $$

where $\epsilon$ is the global learning rate and $\left\langle \frac{\partial^2 E}{\partial w_{ki}^2} \right\rangle$ is a running estimate of the diagonal second derivative with respect to $w_{ki}$. $\mu$ is a parameter that prevents $\eta_{ki}$ from blowing up when the second derivative is small, i.e., when the optimization moves through flat parts of the error function. The second derivatives can be computed on a subset of the training set (500 randomized patterns out of the 60000 patterns in the training set). Since they change very slowly, they only need to be re-estimated every few epochs. In the original program, the diagonal Hessian is re-estimated every epoch.
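As a rough illustration of the per-parameter learning rate described above, the sketch below divides a global rate by the running second-derivative estimate plus a damping term. The class, method and parameter names are hypothetical and are not taken from the program.

```csharp
using System;

public static class DiagonalLevenbergMarquardt
{
    // Individual learning rate for one weight:
    //   rate = globalRate / (diagHessian + mu)
    // diagHessian is the running estimate of the second derivative for that weight;
    // mu keeps the rate bounded when that estimate is close to zero.
    public static double LearningRate(double globalRate, double diagHessian, double mu)
    {
        if (mu <= 0.0) throw new ArgumentOutOfRangeException(nameof(mu));
        return globalRate / (diagHessian + mu);
    }

    // One gradient step for a single weight using its individual learning rate.
    public static double UpdatedWeight(
        double weight, double gradient, double globalRate, double diagHessian, double mu)
    {
        return weight - LearningRate(globalRate, diagHessian, mu) * gradient;
    }
}
```

In a flat region (`diagHessian` near 0) the rate approaches `globalRate / mu`, its largest possible value. Where the curvature is high, the step shrinks accordingly.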

Here is the second derivative computation function in C#:

public void BackpropagateSecondDerivatives(DErrorsList d2Err_wrt_dXn /* in */,

DErrorsList d2Err_wrt_dXnm1 /* out */)

{

// nomenclature (repeated from NeuralNetwork class)

// NOTE: even though we are addressing SECOND

// derivatives ( and not first derivatives),

// we use nearly the same notation as if there

// were first derivatives, since otherwise the

// ASCII look would be confusing. We add one "2"

// but not two "2's", such as "d2Err_wrt_dXn",

// to give a gentle emphasis that we are using second derivatives

//

// Err is output error of the entire neural net

// Xn is the output vector on the n-th layer

// Xnm1 is the output vector of the previous layer

// Wn is the vector of weights of the n-th layer

// Yn is the activation value of the n-th layer,

// i.e., the weighted sum of inputs BEFORE the squashing function is applied

// F is the squashing function: Xn = F(Yn)

// F' is the derivative of the squashing function

// Conveniently, for F = tanh, then F'(Yn) = 1 - Xn^2, i.e.,

// the derivative can be calculated from the output,

// without knowledge of the input

int ii, jj;

uint kk;

int nIndex;

double output;

double dTemp;

var d2Err_wrt_dYn = new DErrorsList(m_Neurons.Count);

//

// std::vector< double > d2Err_wrt_dWn( m_Weights.size(), 0.0 );

// important to initialize to zero

//////////////////////////////////////////////////

//

///// DESIGN TRADEOFF: REVIEW !!

//

// Note that the reasoning of this comment is identical

// to that in the NNLayer::Backpropagate()

// function, from which the instant

// BackpropagateSecondDerivatives() function is derived from

//

// We would prefer (for ease of coding) to use

// STL vector for the array "d2Err_wrt_dWn", which is the

// second differential of the current pattern's error

// wrt weights in the layer. However, for layers with

// many weights, such as fully-connected layers,

// there are also many weights. The STL vector

// class's allocator is remarkably stupid when allocating

// large memory chunks, and causes a remarkable

// number of page faults, with a consequent

// slowing of the application's overall execution time.

// To fix this, I tried using a plain-old C array,

// by new'ing the needed space from the heap, and

// delete[]'ing it at the end of the function.

// However, this caused the same number of page-fault

// errors, and did not improve performance.

// So I tried a plain-old C array allocated on the

// stack (i.e., not the heap). Of course I could not

// write a statement like

// double d2Err_wrt_dWn[ m_Weights.size() ];

// since the compiler insists upon a compile-time

// known constant value for the size of the array.

// To avoid this requirement, I used the _alloca function,

// to allocate memory on the stack.

// The downside of this is excessive stack usage,

// and there might be stack overflow problems. That's why

// this comment is labeled "REVIEW"

double[] d2Err_wrt_dWn = new double[m_Weights.Count];

for (ii = 0; ii < m_Weights.Count; ++ii)

{

d2Err_wrt_dWn[ii] = 0.0;

}

// calculate d2Err_wrt_dYn = ( F'(Yn) )^2 *

// dErr_wrt_Xn (where dErr_wrt_Xn is actually a second derivative )

for (ii = 0; ii < m_Neurons.Count; ++ii)

{

output = m_Neurons[ii].output;

dTemp = m_sigmoid.DSIGMOID(output);

d2Err_wrt_dYn.Add(d2Err_wrt_dXn[ii] * dTemp * dTemp);

}

// calculate d2Err_wrt_Wn = ( Xnm1 )^2 * d2Err_wrt_Yn

// (where d2Err_wrt_Yn is actually a second derivative)

// For each neuron in this layer, go through the list

// of connections from the prior layer, and

// update the differential for the corresponding weight

ii = 0;

foreach (NNNeuron nit in m_Neurons)

{

foreach (NNConnection cit in nit.m_Connections)

{

try

{

kk = (uint)cit.NeuronIndex;

if (kk == 0xffffffff)

{

output = 1.0;

// this is the bias connection; implied neuron output of "1"

}

else

{

output = m_pPrevLayer.m_Neurons[(int)kk].output;

}

// ASSERT( (*cit).WeightIndex < d2Err_wrt_dWn.size() );

// since after changing d2Err_wrt_dWn to a C-style array,

// the size() function this won't work

// accumulate with +=: a shared weight (convolutional layers) is reached by many connections

d2Err_wrt_dWn[cit.WeightIndex] += d2Err_wrt_dYn[ii] * output * output;

}

catch (Exception ex)

{

}

}

ii++;

}

// calculate d2Err_wrt_Xnm1 = ( Wn )^2 * d2Err_wrt_dYn

// (where d2Err_wrt_dYn is a second derivative not a first).

// d2Err_wrt_Xnm1 is needed as the input value of

// d2Err_wrt_Xn for backpropagation of second derivatives

// for the next (i.e., previous spatially) layer

// For each neuron in this layer

ii = 0;

foreach (NNNeuron nit in m_Neurons)

{

foreach (NNConnection cit in nit.m_Connections)

{

try

{

kk = (uint)cit.NeuronIndex;

if (kk != 0xffffffff)

{

// we exclude ULONG_MAX, which signifies the phantom bias neuron with

// constant output of "1", since we cannot train the bias neuron

nIndex = (int)kk;

dTemp = m_Weights[(int)cit.WeightIndex].value;

d2Err_wrt_dXnm1[nIndex] += d2Err_wrt_dYn[ii] * dTemp * dTemp;

}

}

catch (Exception ex)

{

return;

}

}

ii++; // ii tracks the neuron iterator

}

double oldValue, newValue;

// finally, update the diagonal Hessians

// for the weights of this layer neuron using dErr_wrt_dW.

// By design, this function (and its iteration

// over many (approx 500 patterns) is called while a

// single thread has locked the neural network,

// so there is no possibility that another

// thread might change the value of the Hessian.

// Nevertheless, since it's easy to do, we

// use an atomic compare-and-exchange operation,

// which means that another thread might be in

// the process of backpropagation of second derivatives

// and the Hessians might have shifted slightly

for (jj = 0; jj < m_Weights.Count; ++jj)

{

oldValue = m_Weights[jj].diagHessian;

newValue = oldValue + d2Err_wrt_dWn[jj];

m_Weights[jj].diagHessian = newValue;

}

}

//////////////////////////////////////////////////////////////////

Training and Experiments

Although MFC/C++ and C# are not compatible, my program is similar to the original. Using the MNIST database, the network made 291 mis-recognitions out of the 60,000 patterns of the training set, an error rate of only 0.485%. However, it made 136 mis-recognitions out of the 10,000 patterns of the testing set, an error rate of 1.36%. The result was not as good as the benchmark, but it was enough for me to experiment with my own handwritten character set. First, the input picture was divided into character groups from top to bottom; then the characters in each group were detected from left to right and resized to 29x29 pixels before being recognized by the neural network. The program satisfied my requirements in general, and my own handwritten digits were recognized well. The detection functions have been added to AForge.Net’s image processing library for future work. However, because it was programmed only in my free time, I am sure it has many bugs that need to be fixed. Back propagation time is one example: an epoch usually takes around 3800 seconds with a distorted training set, but only about 2400 seconds without distortion. (My computer is an Intel Pentium dual core E6500.) That is rather slow compared to Mike’s program. I also hope to obtain a better handwritten character database, or to cooperate with someone, so that I can continue my experiments and develop my algorithms into a real application.

Bibliography

- Neural Network for Recognition of Handwritten Digits by Mike O’Neill

- Section of Dr. LeCun’s website on “Learning and Visual Perception”

- Modified NIST (“MNIST”) database (11,594 KB total)

- Y. LeCun, L. Bottou, Y. Bengio, and P. Haffner, “Gradient-Based Learning Applied to Document Recognition”, Proceedings of the IEEE, vol. 86, no. 11, pp. 2278-2324, Nov. 1998. [46 pages]

- Y. LeCun, L. Bottou, G. Orr, and K. Muller, “Efficient BackProp”, in Neural Networks: Tricks of the Trade (G. Orr and K. Muller, eds.), 1998. [44 pages]

- Patrice Y. Simard, Dave Steinkraus, John Platt, “Best Practices for Convolutional Neural Networks Applied to Visual Document Analysis”, International Conference on Document Analysis and Recognition (ICDAR), IEEE Computer Society, Los Alamitos, pp. 958-962, 2003.

- Fabien Lauer, Ching Y. Suen and Gerard Bloch, “A Trainable Feature Extractor for Handwritten Digit Recognition”, Elsevier Science, February 2006

- Some additional projects on CodeProject.com that have been used in my program:

  - Implementing MFC-Style Serialization in .NET - Part 1 by Robert Pittenger

  - Resizing a photographic image with GDI+ for .NET by Joel Neubeck

  - An INI file handling class using C# by BLaZiNiX

  - High-Performance Timer in C# by Daniel Strigl

License

This article, along with any associated source code and files, is licensed under The Code Project Open License (CPOL).
