[Translation] CNTK Model Concurrency Demonstration

By robot-v1.0

Permalink: https://www.kyfws.com/ai/cntk-model-concurrency-demonstration-zh/

Copyright notice: Unless otherwise stated, all articles on this blog are licensed under BY-NC-SA. Please credit the source when reposting!

Original article: https://www.codeproject.com/Articles/1259119/CNTK-Model-Concurrency-Demonstration

Original author: asiwel

Translated by this site's robot-v1.0

Preface

How to Deploy Trained Models Concurrently

- Download demo - 151.4 KB
- Visit Part 1: Data Visualizations and Bezier-Curves
- Visit Part 2: Bezier Curve Machine Learning Demonstration
- Visit Part 3: Bezier Curve Machine Learning Demonstration with MS CNTK

Introduction

This article is Part 4 in a series. Previous articles examined techniques for modelling longitudinal data with Bezier curves and for using machine learning algorithms to train network models that recognize patterns and trends reflected in the trajectories of such curves. However, once you have trained and validated one or more suitable classification models, you want to deploy those models efficiently in production runs.

Typically (at least in my limited experience), the classification step, where the model is applied to a new feature data record and a result is returned, is a very fast, quite "short-running" process. However, also typically, in production (where thousands of cases are being examined), that process may be rather deeply embedded in a much more time-consuming "long-running" data-processing step. This may involve all sorts of manipulations to collect, model, and shape "raw data" into records ready to classify. It may then have additional steps (which simply include the classification result as one more variable) to shape the data further for descriptive or predictive analytics, visualization, and so on.

When that is the case, it is natural to think about parallel processing. Unfortunately, the functions that apply the model to the data are often not thread-safe. For example, those for ALGLIB neural networks and decision forests, as well as those provided for MS CNTK neural networks, are not thread-safe. This article demonstrates one technique for deploying trained models safely and concurrently, embedded in an otherwise thread-safe process running inside a Parallel.ForEach loop in C#.

Background

For context, in previous articles we looked at student academic performance over time, from grades 6 through 12, modelled using Bezier curves. We demonstrated machine learning classification models that could be trained to recognize various patterns, such as longitudinal trajectories that identify students who appear to be doing well or who are likely to be at risk.

Here, in this standalone ConcurrencyDemo console app project, we will use the same data set from the previous BezierCurveMachineLearningDemoCNTK project that was presented in Part 3. That project demonstrated various ways to train different types of networks and allowed them to be saved for later use in production runs. This project includes 3 trained models (a CNTK neural network, an ALGLIB neural network, and an ALGLIB decision forest) that were generated by the previous project.

When this ConcurrencyDemo project is downloaded and opened in Visual Studio, the required CNTK 2.6 NuGet package should be restored automatically and included as a reference. Then, configured as x64 builds for Debug and Release, the demonstration of concurrency using CNTK models should run well. However, to test ALGLIB models, you will need to have downloaded and successfully built the free edition of ALGLIB314.dll. A detailed walk-through to do that was presented in Part 2. When this is added as a reference and the appropriate section of the demo code is uncommented, you will be able to test those models as well.

I think this article can be read and understood alone. Reviewing the narrative in Parts 1 through 3 would set the stage for understanding it better. CNTK trained models are NOT thread-safe, and here is a good reference: Model Evaluation on Windows. Parallel operations and concurrency are NOT simple topics and I admittedly am not an expert. Here is a very good refresher reference for that from Microsoft: Patterns for Parallel Programming: Understanding and Applying Parallel Patterns with the .NET Framework 4.

Trained Model Deployment

Part 3 of this series provided code to experiment with training, validating, and saving various classification models using ALGLIB and CNTK. Let's assume you have done that and you have three saved trained model files: an ALGLIB neural net, an ALGLIB decision forest, and a CNTK neural net. Assume as well that you have a new data set consisting of features (in this case, vectors of 24 equally time-spaced values from a starting point to an ending point representing Bezier curve trajectories). Each case will be presented to a classifier as a double[] data array. The classifier should then return a list of classification probabilities and the index of the largest (and therefore most likely) predicted classification.
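
As a minimal sketch of that return shape (not the demo's own code), the helper below turns a list of per-class scores into a probability list plus the index of the largest entry. The names PredictionShape and ToPrediction are hypothetical, and the 1-based label follows the "label classes should start at one" convention used in this article's classifier code.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class PredictionShape
{
    // Hypothetical helper: converts raw per-class scores into
    // (1-based predicted label, probability list).
    public static (int pstatus, List<double> prob) ToPrediction(IEnumerable<float> scores)
    {
        List<double> prob = scores.Select(s => (double)s).ToList();
        if (prob.Count == 0)
            throw new ArgumentException("No class scores supplied.", nameof(scores));

        // Index of the largest probability, shifted so labels start at one
        int pstatus = prob.IndexOf(prob.Max()) + 1;
        return (pstatus, prob);
    }
}
```

For example, PredictionShape.ToPrediction(new[] { 0.1f, 0.7f, 0.2f }) returns label 2, because the second score is the largest.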

There are at least several ways to run trained models concurrently. However, the best and simplest method I have found (following an excellent tip from CodeProject contributor [Bahrudin Hrnjica](http://bhrnjica.net/), who certainly knows CNTK well) involves the use of ThreadLocal<T> class methods. Here is part of the code for the **ClassifierModel.cs** module used in this demonstration:

using CNTK;
using System;
using System.Collections.Generic;
using System.IO;
using System.Linq;
using System.Threading;

namespace ConcurrencyDemo
{
    public enum EstimationMethod { ALGLIB_NN = 1, ALGLIB_DF = 2, CNTK_NN = 3 };

    public interface IClassifier
    {
        int PredictStatus(double[] data);
        (int pstatus, List<double> prob) PredictProb(double[] data);
        int GetThreadCount();
        void ClearThreadLocalStorage();
        void DisposeThreadLocalStorage();
    }

    /// <summary>
    /// Choose Machine Learning Classification Method using EstimationMethod ENUM value
    /// </summary>
    public class MLModel : IClassifier
    {
        // Fields - some encapsulated
        private IClassifier _IClassifierModel;

        // Constructor
        /// <summary>
        /// Loads the trained network indicated by ENUM value from a file
        /// </summary>
        /// <param name="mychoice">an EstimationMethod ENUM value</param>
        /// <param name="applicationPath">path to application folder</param>
        public MLModel(EstimationMethod mychoice, string applicationPath)
        {
            switch (mychoice)
            {
                case EstimationMethod.ALGLIB_NN:
                    _IClassifierModel = new ALGLIB_NN_Model(Path.Combine
                        (applicationPath, "TrainedModels\\TrainedALGLIB_NN.txt"));
                    return;
                case EstimationMethod.ALGLIB_DF:
                    _IClassifierModel = new ALGLIB_DF_Model(Path.Combine
                        (applicationPath, "TrainedModels\\TrainedALGLIB_DF.txt"));
                    return;
                case EstimationMethod.CNTK_NN:
                    _IClassifierModel = new CNTK_NN_Model(Path.Combine
                        (applicationPath, "TrainedModels\\TrainedCNTK_NN.txt"));
                    return;
            }
            // Reached only when mychoice is not a known EstimationMethod value
            throw new Exception("No status estimation method was provided");
        }

        // Methods - Pass-through required by IClassifier interface
        /// <summary>
        /// Classify a Bezier History Curve by Status using this IClassifier model
        /// </summary>
        /// <param name="array">24 equi-spaced Bezier values from start to end</param>
        /// <returns> an integer classification label</returns>
        public int PredictStatus(double[] array)
        {
            return _IClassifierModel.PredictStatus(array);
        }

        /// <summary> Classify a Bezier History Curve by Status and
        /// Probability using this IClassifier model
        /// </summary>
        /// <param name="array">24 equi-spaced Bezier values from start to end</param>
        public (int pstatus, List<double> prob) PredictProb(double[] array)
        {
            return _IClassifierModel.PredictProb(array);
        }

        public int GetThreadCount() { return _IClassifierModel.GetThreadCount(); }

        public void ClearThreadLocalStorage() { _IClassifierModel.ClearThreadLocalStorage(); }

        public void DisposeThreadLocalStorage() { _IClassifierModel.DisposeThreadLocalStorage(); }
    }

    public class CNTK_NN_Model : IClassifier
    {
        // FIELDS
        private readonly CNTK.Function _clonedModelFunc; // this is the original model
        private ThreadLocal<CNTK.Function> _cloneModel;  // this is ThreadLocal clone

        // CONSTRUCTORS
        public CNTK_NN_Model(string networkPathName) // if network is in a file
        {
            if (File.Exists(networkPathName))
            {
                CNTK.Function CNTK_NN = Function.Load(networkPathName,
                    DeviceDescriptor.UseDefaultDevice(), ModelFormat.CNTKv2);
                _clonedModelFunc = CNTK_NN; // Save original
                // One clone is created lazily per thread; "true" tracks all values
                _cloneModel = new ThreadLocal<CNTK.Function>(() =>
                    _clonedModelFunc.Clone(ParameterCloningMethod.Share), true);
            }
            else throw new FileNotFoundException("CNTK_NN Classifier file not found.");
        }

        public CNTK_NN_Model(Function CNTK_NN) // if network is in memory
        {
            _clonedModelFunc = CNTK_NN; // Save original
            _cloneModel = new ThreadLocal<CNTK.Function>(() =>
                _clonedModelFunc.Clone(ParameterCloningMethod.Share), true);
        }

        // METHODS
        /// <summary>
        /// Classify a Bezier Curve History by Status
        /// </summary>
        /// <param name="data">24 equi-spaced Bezier values from start to end</param>
        /// <returns> an integer classification label</returns>
        public int PredictStatus(double[] data)
        {
            (int pstatus, List<double> prob) = PredictProb(data);
            return pstatus;
        }

        /// <summary> Classify a Bezier Curve History by Status and Probability Using CNTK
        /// </summary>
        /// <param name="data">24 equi-spaced Bezier values from start to end</param>
        /// <returns>A tuple (int status, List{double} prob)</returns>
        public (int pstatus, List<double> prob) PredictProb(double[] data)
        {
            // Acquire the clone that belongs to the calling thread
            CNTK.Function NN = _cloneModel.Value;

            // extract features and label from the cloned model
            Variable feature = NN.Arguments[0];
            Variable label = NN.Outputs[0];
            int inputDim = feature.Shape.TotalSize;
            int numOutputClasses = label.Shape.TotalSize;

            // copy the inputs into a float buffer sized to the model input (24 values here)
            float[] xVal = new float[inputDim];
            for (int i = 0; i < inputDim; i++) xVal[i] = (float)data[i];

            Value xValues = Value.CreateBatch<float>(new int[] { feature.Shape[0] }, xVal,
                DeviceDescriptor.UseDefaultDevice());
            var inputDataMap = new Dictionary<Variable, Value>() { { feature, xValues } };
            var outputDataMap = new Dictionary<Variable, Value>() { { label, null } };
            NN.Evaluate(inputDataMap, outputDataMap, DeviceDescriptor.UseDefaultDevice());
            var outputData = outputDataMap[label].GetDenseData<float>(label);

            List<double> prob = new List<double>();
            for (int i = 0; i < numOutputClasses; i++) prob.Add(outputData[0][i]);
            int pstatus = prob.IndexOf(prob.Max()) + 1; // label classes should start at one
            return (pstatus, prob);
        }

        /// <summary>
        /// Returns the number of ThreadLocal values produced and consumed<para>
        /// by the model instance running serially or concurrently</para>
        /// </summary>
        public int GetThreadCount() { return (_cloneModel.Values).Count(); }

        /// <summary>
        /// Dispose the old ThreadLocal instance and create a new one inside the model object
        /// </summary>
        public void ClearThreadLocalStorage()
        {
            _cloneModel.Dispose();
            _cloneModel = new ThreadLocal<CNTK.Function>(() =>
                _clonedModelFunc.Clone(ParameterCloningMethod.Share), true);
        }

        /// <summary>
        /// When finished using the model instance, dispose the ThreadLocal instance first.<para>
        /// Then the model object will be garbage-collected</para>
        /// </summary>
        public void DisposeThreadLocalStorage() { _cloneModel.Dispose(); }
    }
} // end namespace ConcurrencyDemo (the ALGLIB classes are omitted from this excerpt)

Sorry for the long code block, but it is not difficult to explain. Assume we know the names of and full paths to the trained model disk files. In this demo, those are:

applicationPath + "Trained Models\\TrainedALGLIB_NN.txt"

applicationPath + "Trained Models\\TrainedALGLIB_DF.txt"

applicationPath + "Trained Models\\TrainedCNTK_NN.txt"

We begin by selecting a model for the production run using the **EstimationMethod** enum. Later on, maybe deep in some other data processing step, we apply the model to a feature data vector. The two calls look like this:

MLModel myModel = new MLModel(EstimationMethod.CNTK_NN, myApplicationpath);

…

int pred = myModel.PredictStatus(someFeatureDataVector);

…

First, we define an IClassifier interface. The interface is included here to allow the user to add and switch between multiple types of trained models. (It would not be needed if only one type of model were of interest.) In the code above, only the CNTK model is illustrated. Next, we have an MLModel class with a constructor that loads the selected trained model data file and creates a new IClassifier object containing that model. Since both classes implement IClassifier, both must implement several methods: PredictStatus() and PredictProb(), plus a few other helper methods related to the ThreadLocal class. We call the myModel object's methods, and they in turn call the same methods on the IClassifier object it wraps. There, the model is applied to the data and the return values are passed back up the chain to the calling process.

The new IClassifier object's constructor receives the path to the model disk file and loads the model into this variable:

private readonly CNTK.Function _clonedModelFunc; // this is the original model

It then creates its own ThreadLocal<T> object, where T is the trained model type, like this:

private ThreadLocal<CNTK.Function> _cloneModel; // this is ThreadLocal clone

...

_cloneModel = new ThreadLocal<CNTK.Function>(() =>

_clonedModelFunc.Clone(ParameterCloningMethod.Share), true);

Then, later on somewhere else in the code, while running on some thread, the myModel.PredictStatus(datavector) or myModel.PredictProb(datavector) method is called. The first thing the method does is ask the ThreadLocal object for the unique cloned model "Value" to be used on that particular thread. That clone is then applied to the data record, resulting in a classification.

CNTK.Function NN = _cloneModel.Value; // Acquire the unique clone for this thread

...

... evaluate

...

return (pstatus, prob);

ThreadLocal

The most interesting thing about the MLModel class and the IClassifier object it creates is the use of a ThreadLocal object and some assorted properties and methods for it. Here again is the issue: CNTK trained models and associated methods are NOT thread-safe. The same is true for ALGLIB network models. The remedy is to create cloned models to be used for concurrent processing.

If you are running serially (on the application's main thread), you need only one model. If you are running parallel concurrent processes, you will need multiple cloned copies. At the very minimum, you need one copy for each logical processor your hardware provides. For example, if you have 2 CPU cores offering hyperthreading, then you have 4 logical processors. (In a Parallel.ForEach loop, for instance, you can set ParallelOptions.MaxDegreeOfParallelism to control this; in a PLINQ query, the equivalent is the WithDegreeOfParallelism method.) Much of the time, System.Threading methods use data partitioning schemes and allocate a single thread to each logical processor. But still often, load balancing and so forth may result in several threads running on the same processor.
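
For Parallel.ForEach specifically, the cap on concurrent workers is set through ParallelOptions.MaxDegreeOfParallelism (WithDegreeOfParallelism is the PLINQ counterpart). The sketch below is not part of the demo project. It uses a hypothetical DegreeOfParallelismDemo class and a busy-wait as stand-in work, and it records the highest number of loop bodies that were running at the same moment.

```csharp
using System;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

public static class DegreeOfParallelismDemo
{
    // Runs a Parallel.ForEach loop capped at maxDegree concurrent bodies and
    // returns the peak number of bodies observed running simultaneously.
    public static int PeakConcurrency(int maxDegree)
    {
        int current = 0, peak = 0;
        var options = new ParallelOptions { MaxDegreeOfParallelism = maxDegree };

        Parallel.ForEach(Enumerable.Range(0, 200), options, i =>
        {
            int now = Interlocked.Increment(ref current);

            // Raise 'peak' to 'now' if this is the highest count seen so far
            int seen;
            while (now > (seen = Volatile.Read(ref peak)))
                Interlocked.CompareExchange(ref peak, now, seen);

            Thread.SpinWait(20000); // stand-in for real classification work
            Interlocked.Decrement(ref current);
        });
        return peak;
    }
}
```

The cap limits how many bodies run at once. It does not by itself promise how many distinct pool threads take turns running them, which may be one reason a run can create more ThreadLocal clones than there are logical processors.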

Creating those clones is a time- and memory-consuming process and not something to be done "on the fly", so to speak. ThreadLocal<T> handles all this for us, creating and storing one new clone for each new thread and supplying that particular clone to calls made on that particular thread. The place to set that up is in the IClassifier network object constructor.

When the model is running entirely on the main thread, only one clone value is stored and only one copy is ever used. But if you have multiple logical processors and are running a classification method concurrently, you will have multiple values stored in ThreadLocal storage. Several useful methods are provided to check up on that.

GetThreadCount() gets the number of copies that have been stored. It is a good idea to clear and recreate the ThreadLocal object prior to each parallel code block in your program that might involve classification. ClearThreadLocalStorage() does that. Finally, you should always dispose of that storage before exiting your application. DisposeThreadLocalStorage() does that.

So the CNTK_NN object in the code above always knows which cloned model element to use for each data partition and each logical processor, that is, for each ThreadID. This allows the MLModel object to create and run a thread-safe IClassifier object successfully in a concurrent process.
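
The same pattern can be tried without CNTK installed. The sketch below is an illustration under stated assumptions, not the demo's code: FakeModel is a hypothetical stand-in for a model whose evaluation is not thread-safe, and ThreadLocalClassifier mirrors the roles of GetThreadCount(), ClearThreadLocalStorage(), and DisposeThreadLocalStorage() under the hypothetical names CloneCount, Reset(), and Dispose().

```csharp
using System;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical stand-in for a model that is NOT thread-safe:
// it keeps a scratch buffer that concurrent callers would overwrite.
public sealed class FakeModel
{
    private readonly double[] _scratch = new double[24];

    public FakeModel Clone() => new FakeModel();

    public int Predict(double[] data)
    {
        Array.Copy(data, _scratch, _scratch.Length); // per-instance mutable state
        return _scratch.Sum() >= 0 ? 1 : 2;
    }
}

public sealed class ThreadLocalClassifier : IDisposable
{
    private readonly FakeModel _original = new FakeModel();
    private ThreadLocal<FakeModel> _clones;

    public ThreadLocalClassifier() { _clones = NewStorage(); }

    // trackAllValues: true is required for the Values property to work
    private ThreadLocal<FakeModel> NewStorage() =>
        new ThreadLocal<FakeModel>(() => _original.Clone(), trackAllValues: true);

    // Each calling thread lazily receives its own clone
    public int Predict(double[] data) => _clones.Value.Predict(data);

    public int CloneCount => _clones.Values.Count;

    public void Reset() { _clones.Dispose(); _clones = NewStorage(); }

    public void Dispose() { _clones.Dispose(); }
}

public static class ThreadLocalDemo
{
    public static (int serialClones, int parallelClones) Run()
    {
        using (var classifier = new ThreadLocalClassifier())
        {
            // Serial use: every call comes from the same thread
            var data = new double[24];
            for (int i = 0; i < 100; i++) classifier.Predict(data);
            int serialClones = classifier.CloneCount;

            // Start from empty storage, then classify concurrently
            classifier.Reset();
            Parallel.For(0, 10000, i => classifier.Predict(new double[24]));
            int parallelClones = classifier.CloneCount;

            return (serialClones, parallelClones);
        }
    }
}
```

The serial pass creates exactly one clone. The parallel pass creates one clone per thread that actually took part, so that count depends on the machine and on how the loop was scheduled.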

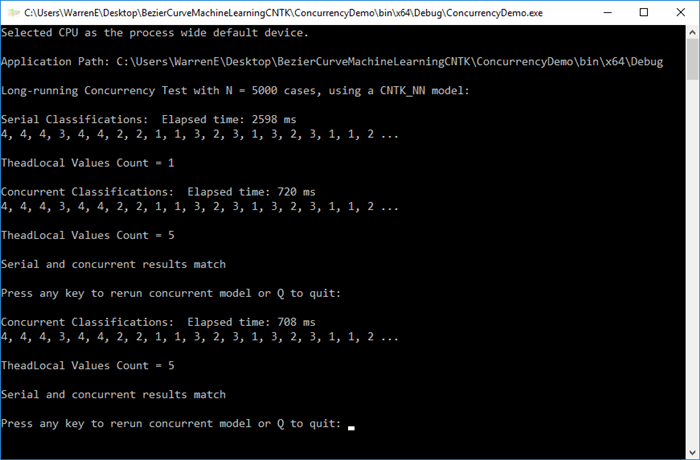

Using the Concurrency Demonstration Code

So now, we come to the ConcurrencyDemo at hand. Download and unzip that project (maybe on the desktop). This step should result in a folder named **ConcurrencyDemo** which contains the **ConcurrencyDemo.sln** file plus other files. Open that solution file in Visual Studio. Check the **Configuration Manager** to ensure that the **Debug** and **Release** builds for all projects in the solution are set to x64. Next, build both the **Debug** and the **Release** versions. If all seems to have gone well so far, choose ConcurrencyDemo as the StartUp project and click Start to build and run the console application. Output similar to image 1 should appear in the console window.

The demo comes with the ALGLIB neural net and decision forest classes commented out. (The lines in the MLModel constructor for these classifiers are also commented out.) To demonstrate that they can also be run concurrently, a last setup step would be to expand the **ConcurrencyDemo** project folder in Solution Explorer, right-click on the **References** folder there, and use the Add Reference option to add the **ALGLIB314.dll** library (which you would have built in Part 2 of this series) to the ConcurrencyDemo project as a reference by clicking its checkbox and the OK button. Then uncomment the commented sections in the **ClassifierModels.cs** module and change the Enum value in the **Program.cs** module.

Points of Interest

The philosophy here is that most of the benefit to be derived from a CodeProject "demonstration" is supposed to come from examination of the code. Readers are certainly encouraged to do that (any suggestions for improvements will be appreciated). This article is probably too long already, and the code in the **Program.cs** module is pretty much self-explanatory.

What we do is load the entire sample data file (N=500) using the DataReader object. Once we have that in memory, for fun, we duplicate it 10 times and reformat it as a dictionary of double[] values accessed by an ID key. Next, we create a local process that will classify all of that data using an MLModel for the CNTK model. There is an option to make those "short-running" operations more realistically "long-running".

First, we run and time this local process being called repeatedly by a simple loop executing serially on the main thread, and save the results (one (ID, Classification) tuple per case) in a list. Next, we run and time the same process inside a Parallel.ForEach loop, storing output in a ConcurrentBag, which we eventually sort by ID and store in a second list. Finally, we compare the two lists to ensure that they match perfectly. That, of course, is the most important test of all.
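
The serial-versus-parallel check described above can be sketched as follows. This is not the demo's Program.cs: the data is synthetic, the class name SerialVsParallelCheck is hypothetical, and Classify is a hypothetical pure function standing in for myModel.PredictStatus so that the sketch runs without any trained model.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;

public static class SerialVsParallelCheck
{
    // Hypothetical stand-in for myModel.PredictStatus(data)
    private static int Classify(double[] curve) => curve.Average() >= 0.5 ? 1 : 2;

    public static bool ResultsMatch()
    {
        // Synthetic dictionary of ID -> 24-value feature vector
        var rng = new Random(12345);
        var cases = new Dictionary<int, double[]>();
        for (int id = 0; id < 5000; id++)
        {
            var curve = new double[24];
            for (int j = 0; j < curve.Length; j++) curve[j] = rng.NextDouble();
            cases[id] = curve;
        }

        // Serial pass on the calling thread, in ID order
        var serial = new List<(int id, int status)>();
        foreach (var kv in cases.OrderBy(c => c.Key))
            serial.Add((kv.Key, Classify(kv.Value)));

        // Parallel pass: results land in the bag in arbitrary order
        var bag = new ConcurrentBag<(int id, int status)>();
        Parallel.ForEach(cases, kv => bag.Add((kv.Key, Classify(kv.Value))));

        // Sort by ID so the two lists can be compared element by element
        var parallel = bag.OrderBy(r => r.id).ToList();
        return serial.SequenceEqual(parallel);
    }
}
```

Sorting the bag by ID before comparing matters because a ConcurrentBag gives no ordering guarantee. With a real, non-thread-safe model in place of Classify, a mismatch here would be the sign that clones are being shared across threads.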

From the console output, we can see that the serial and parallel classifications did match and the concurrent method was more than twice as fast. We can also see that ThreadLocal<T> worked as expected, containing only one entry and using just one model copy for serial processing, and, for that particular parallel processing run, using perhaps 5 or 6 cloned models running on 4 logical processors. (Note that there is an option for running the concurrent process repeatedly to see how resources change. If you have **Diagnostic Tools** open, you can see that almost 100% of CPU resources are used each time you rerun the concurrent process.)

Conclusion

The principal conclusion drawn from this demonstration is that CNTK and other models trained using various machine learning techniques can be run concurrently and safely in production environments, either called directly or deeply embedded in some (otherwise thread-safe) data processing loop.

History

- 1st October, 2018: Version 1.0

License

This article, along with any associated source code and files, is licensed under The Code Project Open License (CPOL).
